CSC570 (Final Project):

Volumetric Reintroduction of Fine and Medium Details via Stereo Imagery

by Erik Nelson

Abstract:

-

I was fortunate enough to be part of the International Computer Engineering eXperience (ICEX) this year, where I spent the last four weeks of the quarter in Malta reconstructing geometric models of cisterns for archaeologists at the University of Malta. My final project contributes to this broader, ongoing effort and uses much of the software we had already produced for the ICEX program.


Stereo Imaging:

-

The resolution of our SONAR-generated cistern maps is poor, so fine- and medium-sized details such as stairs, archways, and rocks have to be modeled with other techniques. To capture them, I set out to create disparity maps. A disparity map is a 2D image in which each pixel stores the horizontal offset (disparity) between matching points in a left and right image, and that offset is inversely proportional to depth. Disparity maps are generally produced either through stereo imaging or through optical flow. I chose to use two GoPro cameras mounted side by side as a stereo pair. We purchased a waterproof housing and attached the stereo cameras to the ROV during our deployments to capture sequences of stereo images.

For the results shown on this page, I only processed images taken above water. Stereo imaging is inherently harder underwater, but I have also been able to produce some accurate disparity maps of features inside the cisterns. Most of the image processing and initial disparity mapping was done in MATLAB and Photoshop, both of which have freely available tutorials on the techniques used here. I began with some highly distorted left and right images of an archway taken with the GoPros alone (not attached to the ROV in this case). Here is the left stereo image:

Most disparity matching algorithms find features that look similar in the left and right images and compute the distance in pixel space between each matched pair. To do this, I needed images that (a) had features that could easily be identified and matched, and (b) had features that could be found by searching along horizontal epipolar lines. To meet requirement (a), I removed lens distortions and applied some quick-and-dirty image processing filters in Photoshop. The following are the resulting grayscale left and right images.

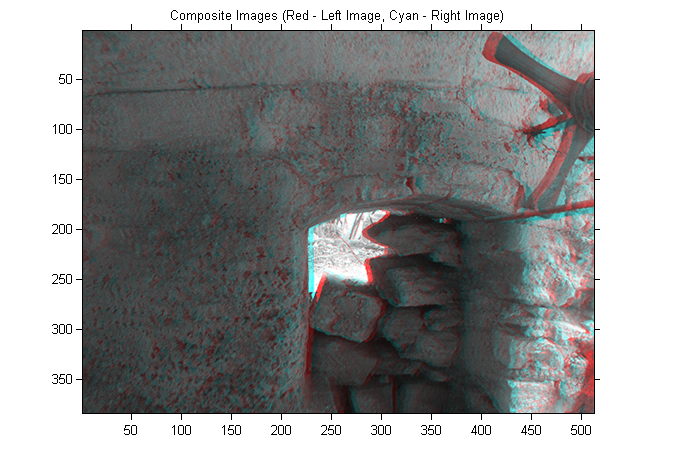
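The search for matching features along a horizontal epipolar line can be sketched as a minimal sum-of-absolute-differences (SAD) block matcher. The sketch assumes the pair is already rectified and stored as lists of grayscale rows. The function names, window size, and synthetic test pattern are illustrative assumptions, not the MATLAB routines used in this project.

```python
def sad(left, right, row, xl, xr, half):
    """Sum of absolute differences between the (2*half+1)^2 windows
    centered at (row, xl) in the left image and (row, xr) in the right."""
    total = 0
    for dy in range(-half, half + 1):
        for dx in range(-half, half + 1):
            total += abs(left[row + dy][xl + dx] - right[row + dy][xr + dx])
    return total


def match_along_row(left, right, row, x, max_disp, half=1):
    """Search only the same row of the right image (the horizontal epipolar
    line of a rectified pair) and return the disparity d = x_left - x_right
    whose window has the lowest SAD cost."""
    best_d, best_cost = 0, float("inf")
    for d in range(max_disp + 1):
        xr = x - d
        if xr - half < 0:
            break  # window would fall off the left edge of the right image
        cost = sad(left, right, row, x, xr, half)
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


# Synthetic rectified pair: the right image is the left image shifted two
# pixels to the left, so every feature has a disparity of 2.
def texture(x, y):
    return x * x + y  # strictly increasing in x, so windows are unambiguous


W, H = 12, 5
left = [[texture(x, y) for x in range(W)] for y in range(H)]
right = [[texture(x + 2, y) for x in range(W)] for y in range(H)]
print(match_along_row(left, right, row=2, x=8, max_disp=5))  # 2
```

Restricting the search to one row is only valid after rectification. Without it, the matcher would have to search a 2D neighborhood.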

To meet requirement (b), I needed to rectify the images so that a feature in one image lies on the same horizontal row as the same feature in the other image. Rectification is a complex task in itself, so I used off-the-shelf algorithms for it. In MATLAB, I loaded the two images and formed a red-cyan composite image, with the red channel taken from the left image and the cyan (green and blue) channels taken from the right image.

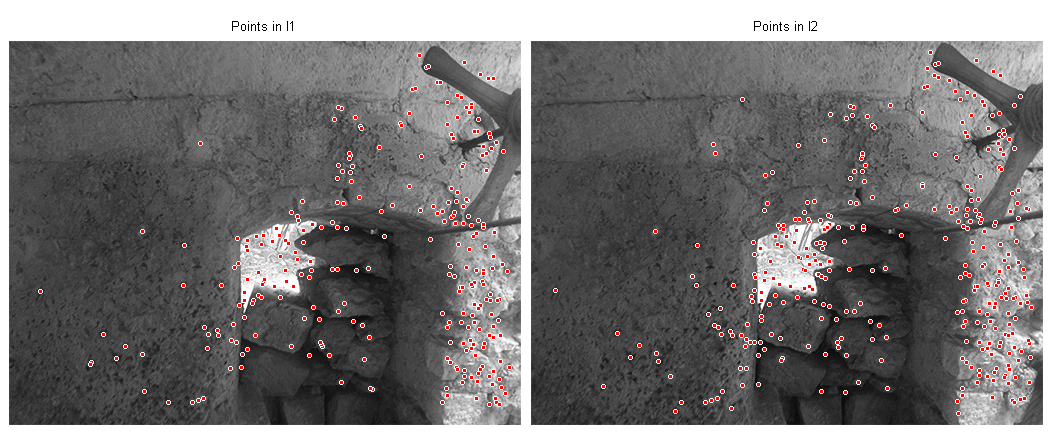
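The red-cyan composite is simple to build: red comes from the left image, and green and blue (which together read as cyan) come from the right. Where the two images agree, a pixel comes out gray. Where they are misaligned, a feature shows up as separate red and cyan copies, which makes the misalignment easy to see. This is a minimal sketch, assuming both images are same-sized grayscale arrays given as lists of rows; the function name is hypothetical.

```python
def red_cyan_composite(left_gray, right_gray):
    """Build an RGB anaglyph from two same-sized grayscale images (lists of
    rows of 0-255 ints): red from the left image, green and blue from the
    right image."""
    if len(left_gray) != len(right_gray) or any(
        len(a) != len(b) for a, b in zip(left_gray, right_gray)
    ):
        raise ValueError("left and right images must be the same size")
    return [
        [(lv, rv, rv) for lv, rv in zip(lrow, rrow)]
        for lrow, rrow in zip(left_gray, right_gray)
    ]


left = [[200, 0], [0, 0]]
right = [[0, 200], [0, 0]]
print(red_cyan_composite(left, right))
# [[(200, 0, 0), (0, 200, 200)], [(0, 0, 0), (0, 0, 0)]]
```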

The next step in the pipeline is to identify 'significant features' in each image. I used MATLAB's Computer Vision Toolbox to do this. Interestingly, for above-water images I could extract a large number of significant features with relative ease, whereas for underwater images I had to set the detection thresholds much lower. In the case shown here, this step was quick and easy.

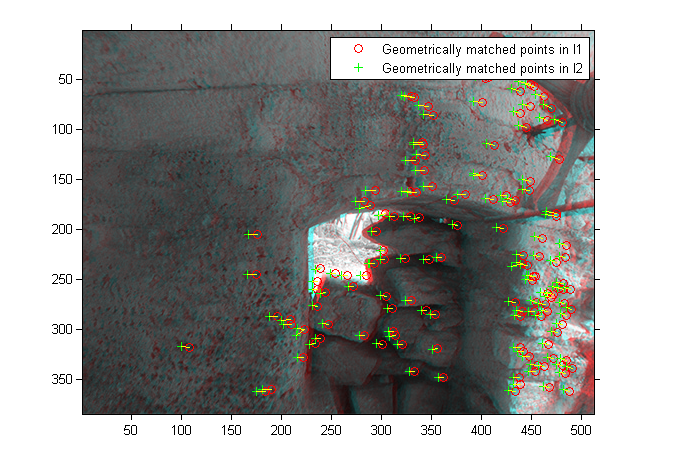
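A Harris corner detector is one common choice for finding significant features. The sketch below is a stand-in and not necessarily the detector the toolbox used. Its `threshold` parameter plays the role of the detection threshold that had to be lowered for underwater images: low-contrast scenes produce weaker corner responses, so fewer pixels clear a fixed threshold. The gradient scheme, 3x3 window, `k = 0.04`, and default threshold are illustrative assumptions.

```python
def harris_corners(img, k=0.04, threshold=1e4):
    """Return (x, y, response) for pixels whose Harris response
    R = det(M) - k * trace(M)^2 exceeds `threshold`, where M is the
    structure tensor summed over a 3x3 window."""
    h, w = len(img), len(img[0])
    # Central-difference gradients; border pixels are left at zero.
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix[y][x] = (img[y][x + 1] - img[y][x - 1]) / 2.0
            iy[y][x] = (img[y + 1][x] - img[y - 1][x]) / 2.0
    corners = []
    for y in range(2, h - 2):
        for x in range(2, w - 2):
            sxx = syy = sxy = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    gx, gy = ix[y + dy][x + dx], iy[y + dy][x + dx]
                    sxx += gx * gx
                    syy += gy * gy
                    sxy += gx * gy
            r = (sxx * syy - sxy * sxy) - k * (sxx + syy) ** 2
            if r > threshold:
                corners.append((x, y, r))
    return corners


# A bright 4x4 square on a dark 12x12 background: corners appear around the
# square's corners, while a flat image produces none.
square = [[255 if 4 <= x <= 7 and 4 <= y <= 7 else 0 for x in range(12)]
          for y in range(12)]
flat = [[128] * 12 for _ in range(12)]
print(len(harris_corners(flat)))  # 0
print((4, 4) in {(x, y) for x, y, _ in harris_corners(square)})  # True
```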

At this point, the features in the two images do not match up. There is no way to solve for an image transform without a one-to-one correspondence between features, so I took a few steps to refine the points until each feature in one channel of the composite image matched exactly one feature in the other.
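One common way to enforce a one-to-one correspondence is to keep only mutual nearest neighbours: a pair survives only if each feature is the other's closest match. The sketch below assumes each feature already has a numeric descriptor vector. The descriptors, names, and squared Euclidean distance are illustrative assumptions, not the refinement steps actually used here.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two descriptor vectors."""
    return sum((p - q) ** 2 for p, q in zip(a, b))


def mutual_matches(desc_left, desc_right):
    """Return index pairs (i, j) where right feature j is the nearest
    neighbour of left feature i AND left feature i is the nearest neighbour
    of right feature j. No index can appear in two pairs, so the result is
    a one-to-one correspondence."""
    if not desc_left or not desc_right:
        return []
    nearest_right = [
        min(range(len(desc_right)), key=lambda j: sq_dist(d, desc_right[j]))
        for d in desc_left
    ]
    nearest_left = [
        min(range(len(desc_left)), key=lambda i: sq_dist(desc_left[i], d))
        for d in desc_right
    ]
    return [(i, j) for i, j in enumerate(nearest_right) if nearest_left[j] == i]


left_desc = [(0, 0), (10, 10), (5, 5)]
right_desc = [(10, 9), (0, 1), (50, 50)]
print(mutual_matches(left_desc, right_desc))  # [(0, 1), (1, 0)]
```

Left feature 2 is dropped because its best match is already claimed by a closer feature. This is exactly the ambiguity that would otherwise break the transform estimate.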

With the significant features matched, the next step was to estimate a 2D transform between the images and apply it. RANSAC is one algorithm for estimating such a transform robustly, since it ignores mismatched points (outliers) while fitting, and I used the MATLAB implementation to solve for my transformations.

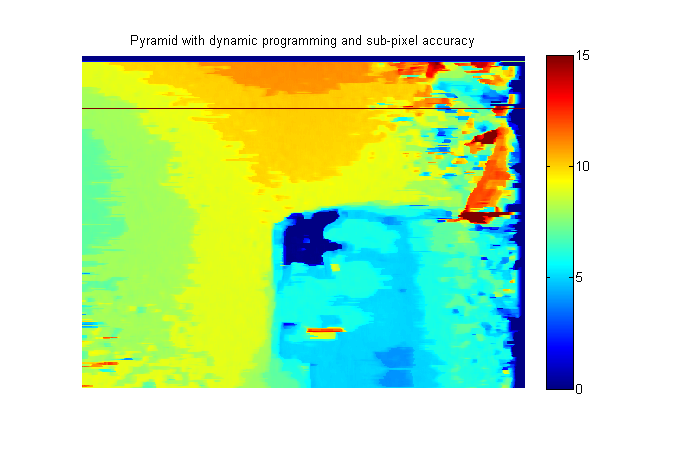
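The RANSAC idea can be made concrete with a minimal sketch that fits the simplest possible model, a pure 2D translation, to matched points containing an outlier. Each iteration works in three steps:

1. Fit the model to a random minimal sample, which for a translation is a single correspondence.
2. Count the matches that agree within a pixel tolerance.
3. Keep the model with the largest consensus set.

Rectification in practice needs a richer model, such as a projective transform. The function name, tolerance, and iteration count here are assumptions; only the sampling-and-consensus structure carries over.

```python
import random


def ransac_translation(src, dst, iters=100, tol=1.0, seed=0):
    """Robustly estimate (tx, ty) such that dst[k] ~= src[k] + (tx, ty).

    Returns the translation refit on the best consensus set, together with
    the indices of that set (the inliers)."""
    if not src or len(src) != len(dst):
        raise ValueError("need equally sized, non-empty point lists")
    rng = random.Random(seed)
    n = len(src)
    best_inliers = []
    for _ in range(iters):
        k = rng.randrange(n)  # minimal sample: one correspondence
        tx, ty = dst[k][0] - src[k][0], dst[k][1] - src[k][1]
        inliers = [
            i for i in range(n)
            if abs(src[i][0] + tx - dst[i][0]) <= tol
            and abs(src[i][1] + ty - dst[i][1]) <= tol
        ]
        if len(inliers) > len(best_inliers):
            best_inliers = inliers
    # Least-squares refit of a translation on the inliers = mean offset.
    m = len(best_inliers)
    tx = sum(dst[i][0] - src[i][0] for i in best_inliers) / m
    ty = sum(dst[i][1] - src[i][1] for i in best_inliers) / m
    return (tx, ty), best_inliers


src = [(0, 0), (1, 2), (5, 5), (3, 7), (8, 1)]
dst = [(3, -2), (4, 0), (8, 3), (6, 5), (100, 100)]  # last match is an outlier
print(ransac_translation(src, dst))  # ((3.0, -2.0), [0, 1, 2, 3])
```

A plain least-squares fit over all five matches would be dragged far off by the outlier. RANSAC sidesteps this by never letting the outlier into the final fit.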

Once the images were rectified, it was straightforward to produce a disparity map. The map in this image is not scaled to meters in the real world, but can be calibrated using a checkerboard and a second disparity map.
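For a rectified pair, converting disparity to metric depth uses the standard relation Z = f·B/d. Here f is the focal length in pixels, B is the baseline between the two cameras in meters, and d is the disparity in pixels. This is why the map needs calibration before it reads in meters: f and B have to be measured. The sketch below is minimal; the function names and the example focal length and baseline are hypothetical values, not the GoPro rig's calibration.

```python
def disparity_to_depth(disparity_px, focal_px, baseline_m):
    """Depth in meters from Z = f * B / d for a rectified stereo pair.
    Non-positive disparities (no match, or a point at infinity) have no
    finite depth and map to None."""
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px


def depth_map(disparity, focal_px, baseline_m):
    """Apply disparity_to_depth to every pixel of a disparity map."""
    return [[disparity_to_depth(d, focal_px, baseline_m) for d in row]
            for row in disparity]


# Hypothetical calibration: 700 px focal length, 10 cm baseline.
print(depth_map([[35, 70], [0, 14]], focal_px=700, baseline_m=0.1))
# [[2.0, 1.0], [None, 5.0]]
```

Because depth is inversely proportional to disparity, a one-pixel disparity error matters far more for distant points than for close ones.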

The GIF at the top of this webpage is a textured visualization of the point cloud produced from this disparity map. All of the disparity maps I produced from above-water and underwater images were used as input to the second half of my final project, described below.
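Turning a disparity map into a textured point cloud amounts to back-projecting every valid pixel through a pinhole camera model. First compute Z = f·B/d, then X = (u − c_x)·Z/f and Y = (v − c_y)·Z/f, and attach the pixel's color from the left image. This is a minimal sketch assuming square pixels and a principal point (c_x, c_y), with hypothetical parameter values. It is not the code that produced the GIF.

```python
def disparity_to_points(disparity, image, focal_px, baseline_m, cx, cy):
    """Back-project a disparity map into a list of (X, Y, Z, color) points
    in the left camera's frame, using a pinhole model with square pixels
    and principal point (cx, cy). `image` supplies the per-pixel color."""
    points = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d <= 0:
                continue  # no valid stereo match at this pixel
            z = focal_px * baseline_m / d
            x = (u - cx) * z / focal_px
            y = (v - cy) * z / focal_px
            points.append((x, y, z, image[v][u]))
    return points


disparity = [[0, 10], [10, 0]]
colors = [["black", "red"], ["green", "blue"]]
print(disparity_to_points(disparity, colors, focal_px=100, baseline_m=0.5, cx=0, cy=0))
# [(0.05, 0.0, 5.0, 'red'), (0.0, 0.05, 5.0, 'green')]
```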

Volumetric Reintroduction of Medium-Scale Geometry:

-

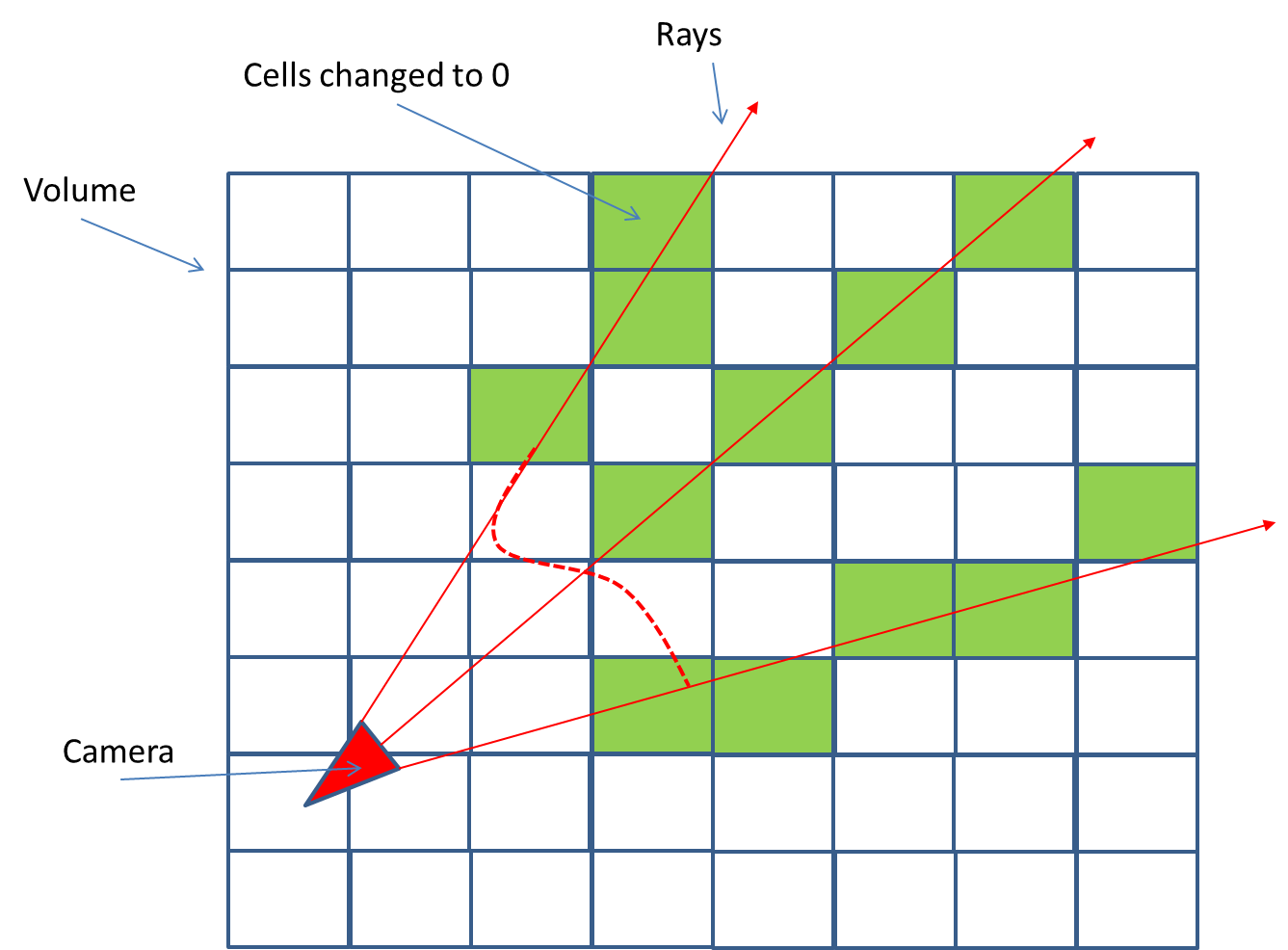

The second half of my project dealt with reincorporating the fine- and medium-scale geometry obtained via stereo imaging during our ROV deployments. The idea was to find a way to merge the stereo data into the rough SONAR-generated mesh, since SONAR has much more limited resolution. After considering a few ideas, I settled on one approach. As a preprocessing step, Jeff Forrester (also in the class) produces complete volumes of our cistern maps using the level set method. My idea was to use projective texturing and raycasting to 'unoccupy' cells in the volume based on the disparity map. I could then re-run marching cubes on the volume and reconstruct some of the geometry present in the real cisterns. Here is a graphic of the 'unoccupy'/volumetric raycasting stage of the algorithm.

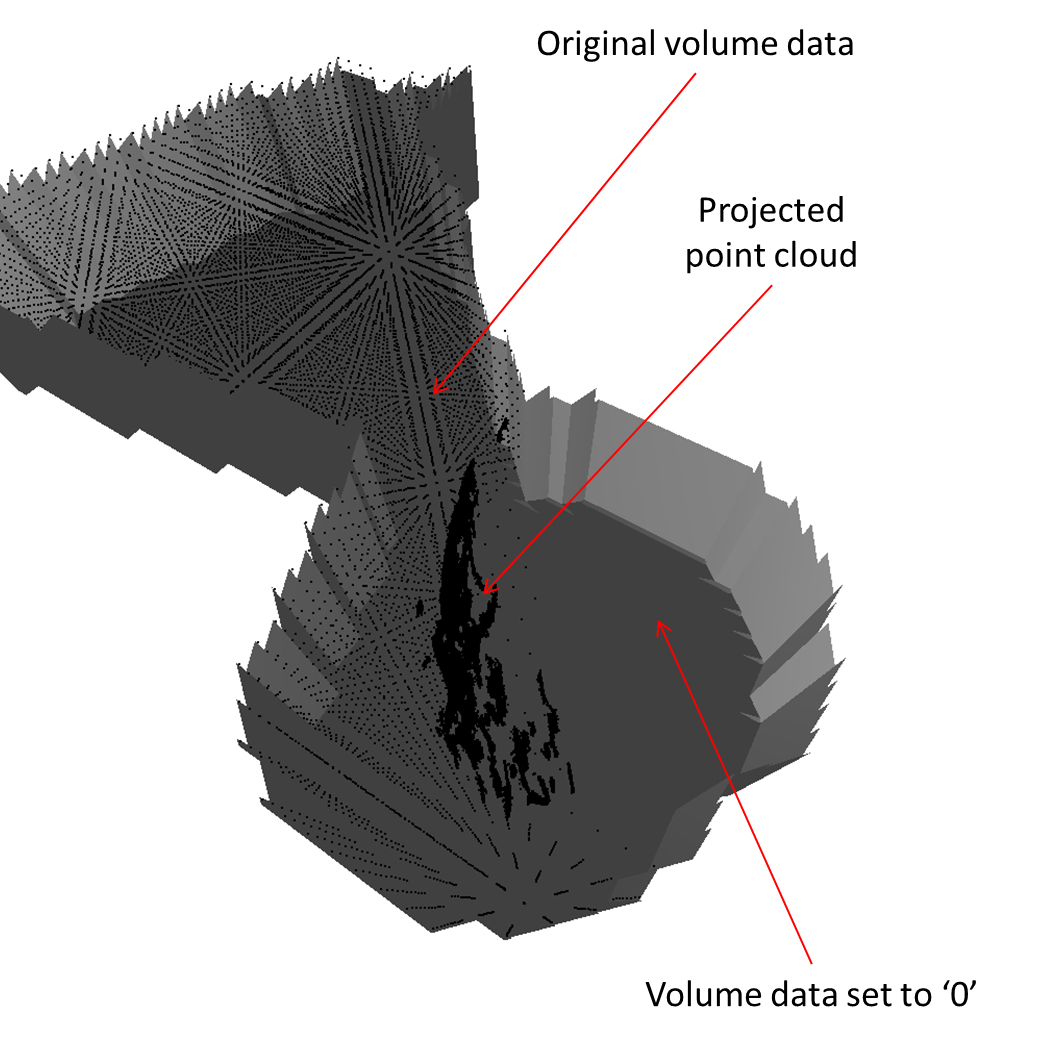
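Projective texturing treats the stereo camera as a projector. Each voxel center is transformed into the projector's frame and projected through a pinhole model to find the disparity-map pixel it falls on. Points behind the projector or outside the image are rejected, which is the same test as constraining points to the projector frustum. This is a minimal sketch; the pose representation (a world-to-projector rotation `R` plus the projector position `center`) and all names are assumptions.

```python
def project_to_projector(point, R, center, focal_px, cx, cy, width, height):
    """Project a world-space point into a pinhole projector.

    R is a 3x3 world-to-projector rotation (list of rows) and center is the
    projector's position in world space. Returns (u, v, depth) in pixel
    coordinates, or None if the point lies outside the projector frustum."""
    d = [point[i] - center[i] for i in range(3)]
    xc, yc, zc = (sum(R[r][i] * d[i] for i in range(3)) for r in range(3))
    if zc <= 0:
        return None  # behind the projector
    u = focal_px * xc / zc + cx
    v = focal_px * yc / zc + cy
    if not (0 <= u < width and 0 <= v < height):
        return None  # outside the image, hence outside the frustum
    return u, v, zc


identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
origin = (0, 0, 0)
print(project_to_projector((0, 0, 2), identity, origin, 100, 50, 50, 100, 100))
# (50.0, 50.0, 2)
print(project_to_projector((5, 0, 1), identity, origin, 100, 50, 50, 100, 100))
# None
```

Comparing the returned depth against the stereo depth stored at pixel (u, v) tells whether a voxel sits in front of the observed surface, that is, in space the cameras saw as empty.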

In this example, I used a basic raycasting implementation that steps along each ray by a fixed distance, rather than something more exact like Bresenham's algorithm. I wrote some code to constrain the disparity map point cloud to a projector frustum and then implemented this technique. In the following graphic, the gray mesh is the SONAR-reconstructed mesh we were previously working with, and the volume can clearly be seen in the background. The dense point cloud is the projected disparity map, whose points mark where each ray stops resetting volume cells to unoccupied.

The disparity map for this image was an underwater disparity map of some rocky features that we produced during last year's ICEX trip.

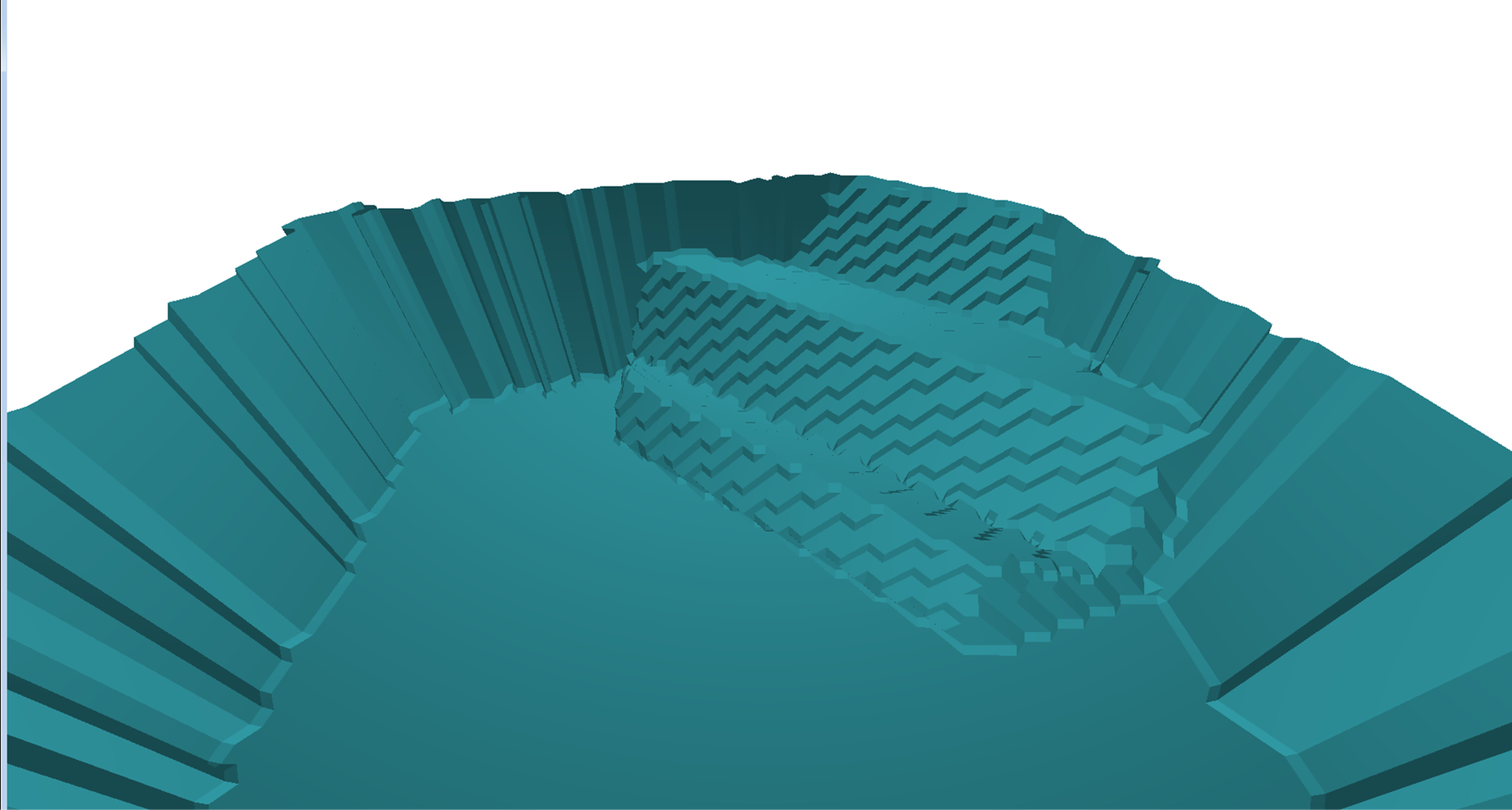
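The fixed-step raycasting described above works as follows. March from the projector origin toward each projected disparity point in constant increments and mark every voxel visited as unoccupied. Stop a small margin before the point so the observed surface itself stays solid. The sparse set-of-indices volume, the `step` and `margin` parameters, and the function name are assumptions made for brevity.

```python
import math


def carve_along_ray(occupied, origin, surface_point, voxel_size, step, margin):
    """March from `origin` toward `surface_point` in increments of `step`,
    removing each visited voxel from `occupied` (a set of integer (i, j, k)
    voxel indices, modified in place). Marching stops `margin` world units
    before the surface point so the observed surface is not carved away."""
    delta = [s - o for s, o in zip(surface_point, origin)]
    length = math.sqrt(sum(c * c for c in delta))
    if length == 0:
        return
    direction = [c / length for c in delta]
    t = 0.0
    while t < length - margin:
        p = [o + t * c for o, c in zip(origin, direction)]
        occupied.discard(tuple(math.floor(c / voxel_size) for c in p))
        t += step


# A solid row of ten unit voxels along x; the stereo surface point sits in
# voxel 7, so voxels 0-6 between the projector and the surface are carved.
volume = {(i, 0, 0) for i in range(10)}
carve_along_ray(volume, origin=(0.5, 0.5, 0.5), surface_point=(7.5, 0.5, 0.5),
                voxel_size=1.0, step=0.25, margin=1.0)
print(sorted(volume))  # [(7, 0, 0), (8, 0, 0), (9, 0, 0)]
```

Because the step is fixed, a ray can skip a voxel whose corner it only clips whenever `step` is not much smaller than `voxel_size`. Exact voxel traversal (a 3D Bresenham-style walk or the Amanatides-Woo algorithm) avoids this at the cost of more bookkeeping.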

The rocky-wall result above is a poor example of reintroducing medium-scale details, since it shows only a rocky wall. A more relevant feature would be stairs or an archway, which I demonstrated with a disparity map of stairs in a rectangular volume. After running marching cubes again on the volume, the stairs are clearly visible.

I am continuing to work on the resolution issue presented here, and will be working on my ICEX projects for the foreseeable future.