Ray Tracing Volumetric Data

Chris Gibson

CSC 572 - Dr. Zoe Wood

Description

The focus of this project was to take volumetric data supplied by the user (via some standard format) and visualize it using a ray tracer previously implemented in Advanced Rendering Techniques (CSC 473). The project involved researching the available volume data formats, implementing a spatial data structure to manage and optimize access to the data, and implementing an algorithm to traverse the dense three-dimensional arrays cost-effectively while producing quality renders of the data.

Details

Volume Data

The volume data was gathered primarily from the University of North Carolina (see references section). Each file held one horizontal "slice" of the volume, stored as a two-dimensional array of 16-bit values that represented the opacity (or, in this case, density) of each point.
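To make the slice layout concrete, here is a minimal sketch of decoding one slice and stacking several into a volume. The slice dimensions, the byte order (big-endian is assumed by default), and the function names `parse_slice` and `load_volume` are illustrative assumptions and are not taken from the project; check the documentation of the dataset you are loading.

```python
import struct


def parse_slice(raw, width, height, big_endian=True):
    """Decode one slice of unsigned 16-bit samples into rows of ints.

    Assumption: samples are stored row by row with no header.
    """
    expected = width * height * 2  # two bytes per sample
    if len(raw) != expected:
        raise ValueError(f"expected {expected} bytes, got {len(raw)}")
    fmt = (">" if big_endian else "<") + f"{width * height}H"
    flat = struct.unpack(fmt, raw)
    return [list(flat[y * width:(y + 1) * width]) for y in range(height)]


def load_volume(slice_blobs, width, height, big_endian=True):
    """Stack decoded slices so that samples are addressed as volume[z][y][x]."""
    return [parse_slice(blob, width, height, big_endian) for blob in slice_blobs]
```

In practice each entry of `slice_blobs` would be the bytes read from one slice file, opened in binary mode and supplied in slice order.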

Sample cross section "slice", visualized from black to white based on opacity/density.

Spatial Data Structure

An octree was chosen to manage the input data. The octree gave the renderer an O(log n) search, limiting the number of intersection tests by discarding entire octants of the grid as the structure was traversed. In addition to faster searching, the octree ignored all empty space: entire sections were removed from (or, more precisely, never added to) the tree when the volume data was loaded.
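The idea of never adding empty space to the tree can be sketched as follows. This is an illustration under stated assumptions, not the project's implementation: the volume is assumed to be a cube with a power-of-two edge length, indexed as `volume[z][y][x]`, a density of 0 is assumed to mean "empty", and the names `Leaf`, `Branch`, `build_octree`, and `count_leaves` are hypothetical.

```python
class Leaf:
    """A single non-empty voxel."""
    def __init__(self, pos, density):
        self.pos = pos            # (x, y, z) voxel coordinates
        self.density = density


class Branch:
    """A cubic region that stores only its non-empty children."""
    def __init__(self, origin, size, children):
        self.origin = origin      # minimum corner (x, y, z)
        self.size = size          # edge length in voxels
        self.children = children


def build_octree(volume, origin=(0, 0, 0), size=None):
    """Return a node for the cube at `origin`, or None if it is entirely empty."""
    if size is None:
        size = len(volume)
    x0, y0, z0 = origin
    if size == 1:
        density = volume[z0][y0][x0]
        return Leaf(origin, density) if density > 0 else None
    half = size // 2
    children = []
    for dz in (0, half):
        for dy in (0, half):
            for dx in (0, half):
                child = build_octree(volume, (x0 + dx, y0 + dy, z0 + dz), half)
                if child is not None:   # empty octants are never added
                    children.append(child)
    return Branch(origin, size, children) if children else None


def count_leaves(node):
    """Count the voxels that actually made it into the tree."""
    if node is None:
        return 0
    if isinstance(node, Leaf):
        return 1
    return sum(count_leaves(child) for child in node.children)
```

Because empty octants return `None` at every level, a mostly empty volume produces a tree far smaller than the dense array it was built from.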

The octree contains two types of nodes: branch nodes and leaf nodes. When a ray enters the octree, each branch node checks whether any of its children were hit and, if so, applies that child's contribution to the running opacity value. This allows opacity to be gathered during the recursive intersection steps. As a consequence, the intersections that the ray tracer receives and handles are not guaranteed to be in order along the ray. In future modifications (involving shadowing and ambient occlusion within participating media) the order will matter, and the intersection tests will have to be changed to accommodate this.
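The recursive gathering described above can be sketched roughly as follows. The source does not say how a child's contribution is combined with the running value, so this sketch assumes a multiplicative transmittance model (opacity = 1 minus the product of (1 - alpha) over the hit leaves), which gives the same result regardless of visiting order. The node classes, the slab-based box test, and all names here are hypothetical.

```python
import math


class Leaf:
    def __init__(self, lo, hi, alpha):
        self.lo, self.hi = lo, hi      # axis-aligned box corners
        self.alpha = alpha             # assumed per-voxel opacity in [0, 1]


class Branch:
    def __init__(self, lo, hi, children):
        self.lo, self.hi = lo, hi
        self.children = children


def hits_box(origin, direction, lo, hi):
    """Slab test: does the ray (t >= 0) intersect the axis-aligned box?"""
    t_near, t_far = 0.0, math.inf
    for o, d, a, b in zip(origin, direction, lo, hi):
        if d == 0.0:
            if o < a or o > b:         # parallel to this slab and outside it
                return False
            continue
        t0, t1 = (a - o) / d, (b - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True


def accumulate(node, origin, direction, transmittance=1.0):
    """Return the transmittance left after the ray passes through `node`."""
    if not hits_box(origin, direction, node.lo, node.hi):
        return transmittance           # whole subtree skipped
    if isinstance(node, Leaf):
        return transmittance * (1.0 - node.alpha)
    for child in node.children:        # children visited in storage order
        transmittance = accumulate(child, origin, direction, transmittance)
    return transmittance


def opacity(root, origin, direction):
    """Total opacity gathered along the ray."""
    return 1.0 - accumulate(root, origin, direction)
```

Because multiplication commutes, visiting children out of order does not change the total here. Effects that depend on what lies in front of a sample, such as shadowing, would need the hits sorted by distance along the ray.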

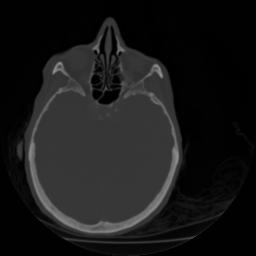

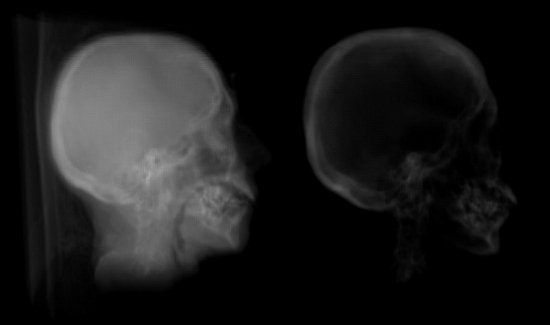

These two images were rendered from the same dataset. The left image uses the unmodified data, whereas the right image was stripped of less dense (less opaque) material.
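One simple way to strip less dense material is a density threshold applied before the data is rendered. The sketch below is an assumption about how that could be done, not a description of the project's code; the function name `strip_low_density` and the choice to zero out samples below the threshold are illustrative.

```python
def strip_low_density(volume, threshold):
    """Return a copy of volume[z][y][x] with samples below `threshold` set to 0."""
    return [
        [[d if d >= threshold else 0 for d in row] for row in plane]
        for plane in volume
    ]
```

The threshold value would be chosen per dataset, depending on which material should remain visible.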

Media

The following videos were generated from ray traced renders of volume data.

References

- Stanford volume data archive

- Efficient Ray Tracing of Volume Data - By Marc Levoy

- Survey of Octree Volume Rendering Methods - By Aaron Knoll

- Ray Tracing Volume Densities - By James T. Kajiya and Brian P. Von Herzen

- Display of Surfaces from Volume Data - By Marc Levoy