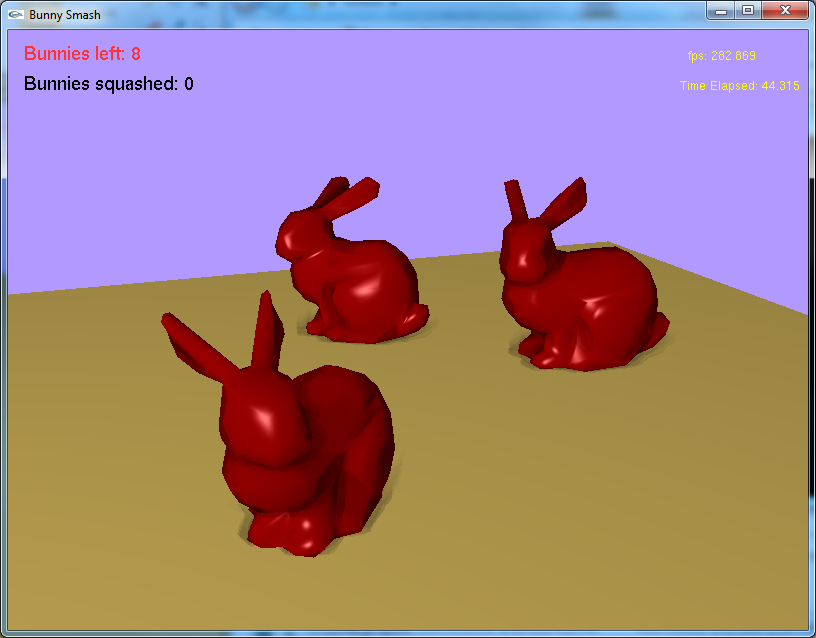

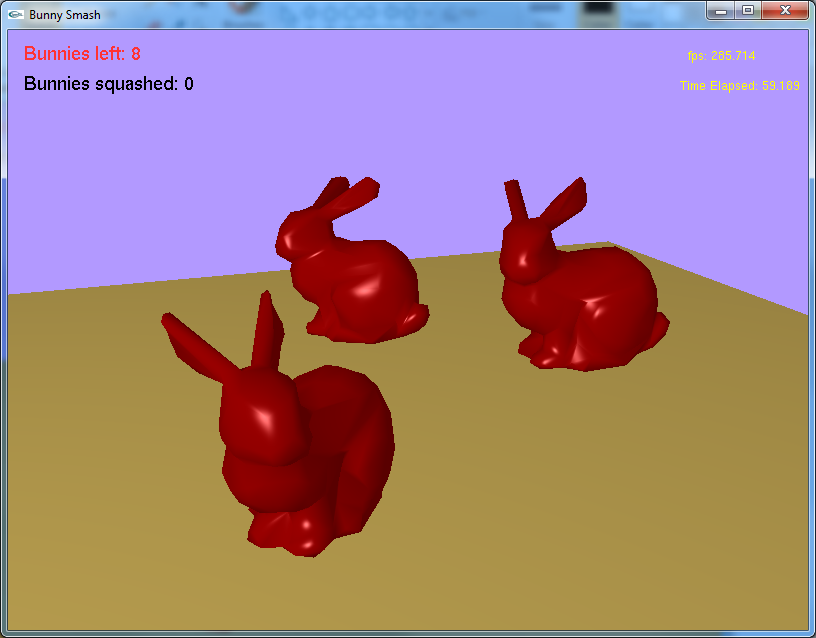

Bunnies with SSAO and Lambertian shading

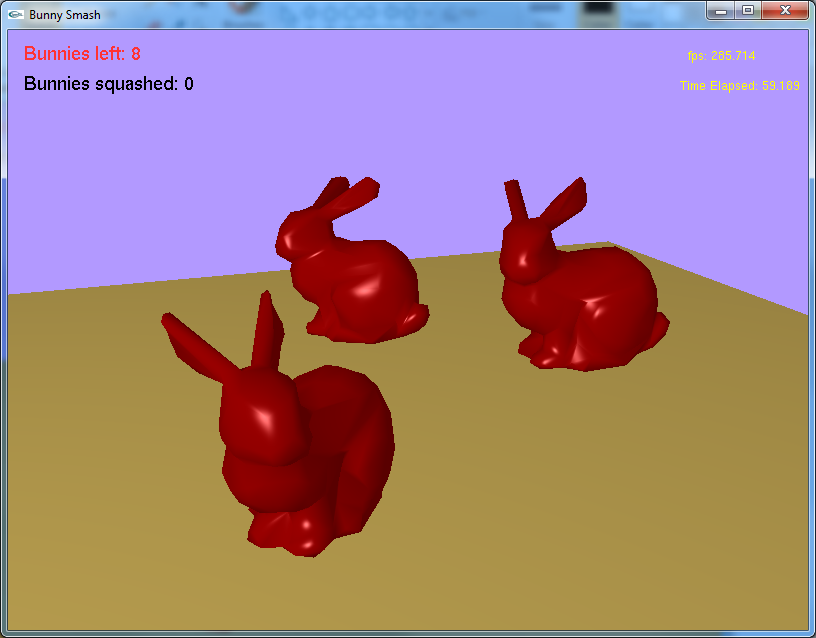

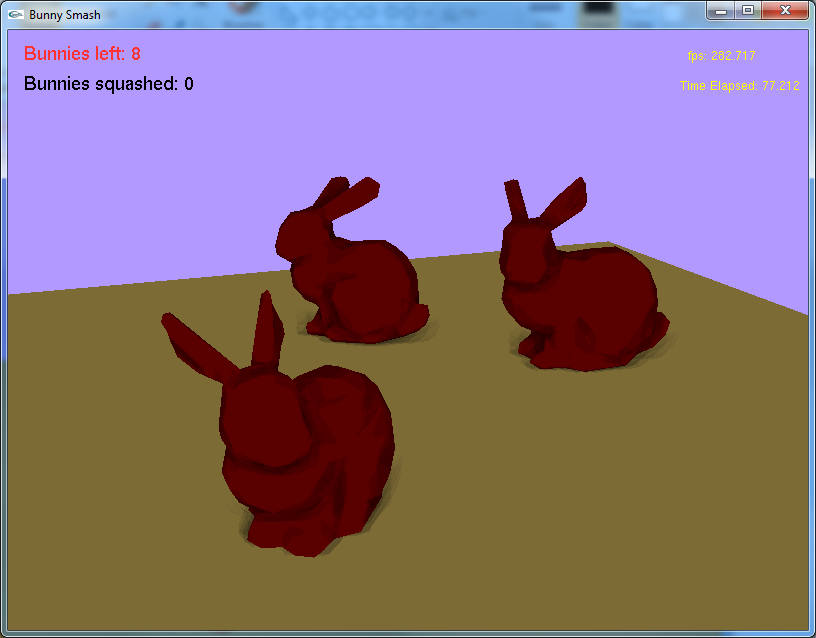

Bunnies without SSAO, with Lambertian shading

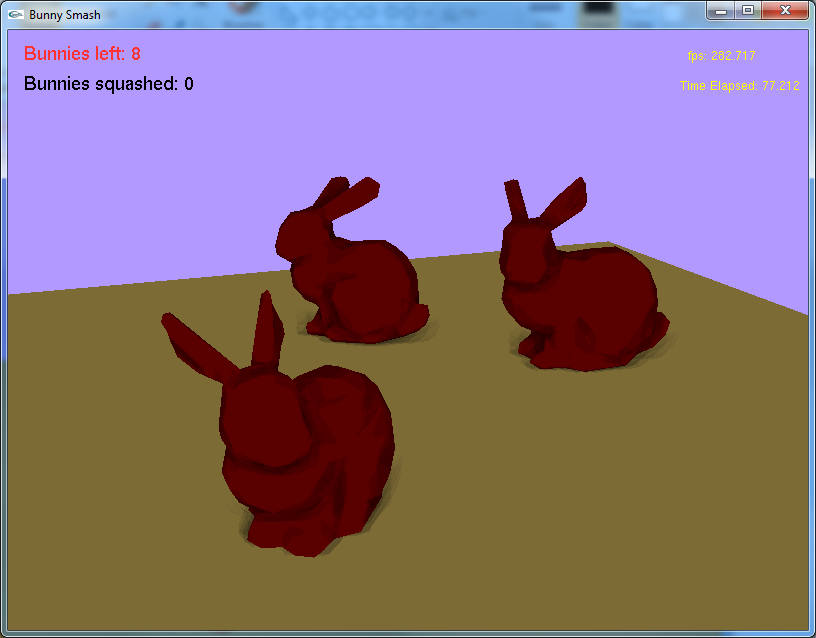

Bunnies with SSAO and only ambient light

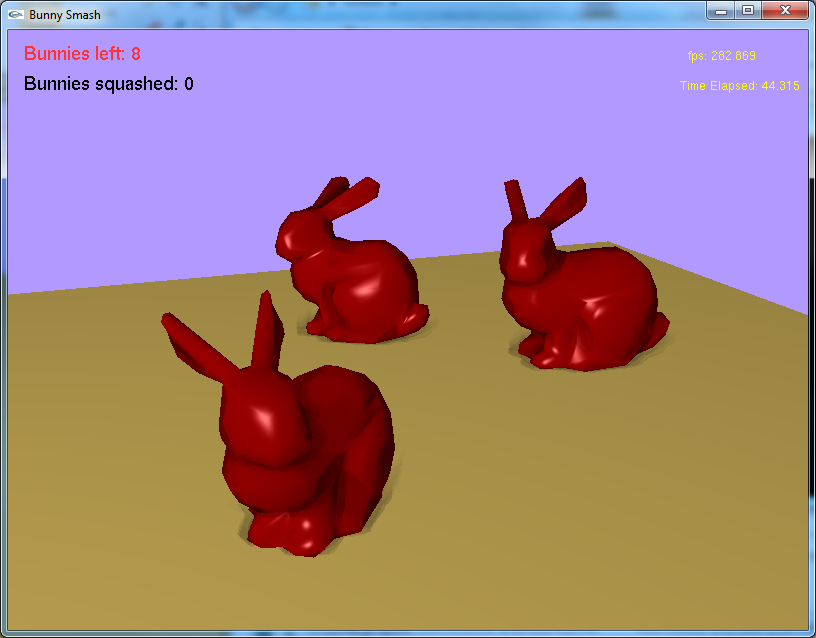

Bunnies without SSAO, with only ambient light

CSC 572 (Fall 2008)

Global illumination techniques like radiosity and ray tracing generate high-quality, very believable images because they attempt to model how light physically interacts with objects in the world. However, a major drawback of these rendering approaches is their runtime: up to hours in some cases.

In real-time graphics, scenes must be rendered in milliseconds, not hours. It is still desirable to generate lifelike images, so special techniques are needed that keep render time low while pushing fidelity higher. Normal mapping is an excellent example: it allows simplified meshes to appear detailed without the fine polygons that such detail would otherwise require.

The technique presented here is called Screen Space Ambient Occlusion (SSAO). Ambient Occlusion by itself is an attempt to model how light is attenuated by occluding geometry. It is considered a global method (i.e. it takes into account other geometry in the scene), but it is very crude compared to full global illumination techniques. Normally, Ambient Occlusion is performed by casting rays from the location of a pixel in world space; rays that do not intersect geometry increase the brightness of a surface, and those that do intersect geometry do not contribute.

The ray casting of Ambient Occlusion is still a demanding computation, especially when enough rays are cast to produce convincing results. The SSAO technique, first used by the 2007 game Crysis, was therefore introduced to perform a similar task in a new, geometry-independent manner. SSAO is implemented purely on the GPU in a fragment shader (hence the term screen space) and, in its simplest form, uses only the depth buffer to make judgments about occlusion at a pixel. The depths of neighboring pixels are sampled and an occlusion term is calculated from the differences in depth. This occlusion term is used to modulate the ambient term in a local reflection model.

Strengths of SSAO:

Disadvantages of SSAO:

Two passes are required for this algorithm. In the first pass, textures are generated for use within the fragment shader. In the second pass, the scene is rendered to the screen using the programmable pipeline in order to implement the SSAO.

This implementation requires four textures: a depth texture and a normals texture holding per-pixel values, a random texture (using the red and blue channels), and a one-dimensional texture representing the occlusion function.

In the fragment shader nine depths are sampled; one for the current pixel, and eight more for comparison. The locations of the eight neighboring pixels are generated semi-randomly by sampling the combination of cardinal directions and intercardinal directions at varying offsets based on the values obtained from the random texture.
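The offset generation can be sketched on the CPU in Python. This is a minimal illustration under stated assumptions, not the project's shader: the names `DIRECTIONS`, `sample_offsets`, and `max_offset` are hypothetical, and it assumes each value read from the random texture is a scale factor in [0, 1] applied along one of the eight fixed directions.

```python
import math

# Four cardinal and four intercardinal unit directions in screen space.
_D = math.sqrt(0.5)
DIRECTIONS = [
    (1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0),
    (_D, _D), (-_D, _D), (_D, -_D), (-_D, -_D),
]


def sample_offsets(random_values, max_offset):
    """Return eight (dx, dy) offsets, one per direction.

    random_values: eight numbers in [0, 1] (assumed to come from the
                   random texture); each scales one direction.
    max_offset:    hypothetical maximum lookup distance.
    """
    if len(random_values) != len(DIRECTIONS):
        raise ValueError("expected one random value per direction")
    return [
        (dx * r * max_offset, dy * r * max_offset)
        for (dx, dy), r in zip(DIRECTIONS, random_values)
    ]
```

Keeping the directions fixed and randomizing only the distance matches the "semi-random" description: the samples always surround the pixel, but their spacing varies from pixel to pixel.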

Next, the depth difference between the current pixel and each of its neighbors is calculated. This value is normalized by a defined maximum occlusion distance and used as a lookup coordinate into the occlusion function texture, which returns a floating-point occlusion term in the range [0,1], with 0 representing no occlusion and 1 representing full occlusion. However, if the surface normals of the current pixel and a neighbor, read from the normals texture, are too similar, the occlusion term for that neighbor is discarded. This prevents near-planar surfaces from occluding themselves.
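A per-sample occlusion lookup along these lines might look like the following Python sketch. Several details are assumptions rather than facts from the implementation: depth is taken to increase away from the camera, the difference is computed as current minus neighbor (so a positive value means the neighbor is in front), the 1D texture is modeled as a plain list with nearest-texel lookup, and `normal_threshold` is a hypothetical cutoff on the dot product of the two normals.

```python
def lookup_occlusion(table, t):
    """Nearest-texel lookup into a 1D occlusion table.

    Coordinates outside [0, 1] yield no occlusion: the neighbor is
    either behind the current pixel or too far in front of it.
    """
    if t < 0.0 or t > 1.0:
        return 0.0
    index = min(int(t * len(table)), len(table) - 1)
    return table[index]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def sample_occlusion(center_depth, neighbor_depth,
                     center_normal, neighbor_normal,
                     table, max_distance, normal_threshold=0.95):
    """Occlusion contributed by one neighbor, in [0, 1]."""
    # Near-identical normals suggest the same (near-planar) surface,
    # so the sample is discarded to avoid self-occlusion.
    if dot(center_normal, neighbor_normal) > normal_threshold:
        return 0.0
    # Positive difference: the neighbor is closer to the camera.
    t = (center_depth - neighbor_depth) / max_distance
    return lookup_occlusion(table, t)
```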

Finally, the arithmetic mean, m, is calculated for all occlusion values, and is used to modulate the ambient term, a, of a Lambertian shading model using the equation a*(1-m).
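As a small worked illustration of this final step, the Python sketch below averages the occlusion terms and applies a*(1-m). The function names are hypothetical, and the way the ambient and diffuse terms are combined in `shade` is an assumed, conventional form of a Lambertian model rather than the project's exact shader code.

```python
def modulated_ambient(ambient, occlusion_terms):
    """Scale the ambient term a by (1 - m), m being the mean occlusion."""
    m = sum(occlusion_terms) / len(occlusion_terms)
    return ambient * (1.0 - m)


def shade(ambient, diffuse, n_dot_l, occlusion_terms):
    """Assumed Lambertian model: occluded ambient plus clamped diffuse."""
    return (modulated_ambient(ambient, occlusion_terms)
            + diffuse * max(n_dot_l, 0.0))
```

With eight samples of which four are fully occluded, m is 0.5, so an ambient term of 0.5 drops to 0.25 while the diffuse contribution is left untouched.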

Overcoming the hurdles associated with learning the programmable pipeline was my biggest issue. Having never written a shader, I relied on internet tutorials to learn the basics. After implementing basic Lambertian and toon shaders, the next hurdle was learning how to implement SSAO.

There aren't any white papers on SSAO that I could find. The closest I could get was a paper written by Dominic Filion and Rob McNaughton at Blizzard for SIGGRAPH 2008, entitled "Starcraft II: Effects and Techniques" [Filion], which provides an overview of their technique and addresses some of their SSAO artifacts.

In the end I chose to implement my own algorithm, borrowing the occlusion function from Mr. Filion and Mr. McNaughton, and the random sampling from a colleague, Mr. Chris Gibson. I felt the technique described in [Filion] went beyond what this project needed, and that a simpler approach could still produce convincing results. In my opinion their more thorough approach still produces better results, but I'm satisfied with what I obtained using only eight samples and limited development time.

The current implementation is rather basic, and it would be useful to experiment with additional augmentations to the algorithm.

First, I would like to improve the random sampling. Currently the sampling is quite uniform (an artifact of my first, entirely uniform implementation), and a better random scheme could improve the approximation by reducing patterns created by more uniform sampling methods. Additionally, more samples could be used to acquire a more accurate approximation.

Another improvement would be blurring the results using a smart Gaussian blur, as outlined in [Filion]. The "smart" aspect of the blur would be to avoid bleeding occlusion into other objects (i.e. reduce the Gaussian weight to zero if the depth difference or surface normal difference of a sample is too great). This would require the occlusion terms to be stored in a texture as well.
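The idea of zeroing Gaussian weights across discontinuities can be sketched in one dimension. The Python below is an illustrative assumption of how such a blur might work, not the method from [Filion] or code from this project: `smart_blur_1d` and its parameters are hypothetical names, and for brevity it tests only the depth difference, omitting the normal comparison.

```python
import math


def smart_blur_1d(occlusion, depth, radius, sigma, max_depth_diff):
    """Depth-aware Gaussian blur over one row of occlusion values.

    A neighbor whose depth differs from the center pixel's by more
    than max_depth_diff gets weight zero, so occlusion does not bleed
    across object boundaries.
    """
    n = len(occlusion)
    result = []
    for i in range(n):
        total = 0.0
        weight_sum = 0.0
        for k in range(-radius, radius + 1):
            j = i + k
            if j < 0 or j >= n:
                continue  # outside the row
            if abs(depth[j] - depth[i]) > max_depth_diff:
                continue  # different surface: weight reduced to zero
            w = math.exp(-(k * k) / (2.0 * sigma * sigma))
            total += w * occlusion[j]
            weight_sum += w
        # The center sample always passes, so weight_sum >= 1.
        result.append(total / weight_sum)
    return result
```

Dividing by the sum of the surviving weights keeps the result in the original range even when most neighbors are rejected. A two-dimensional version would typically run this pass horizontally and then vertically.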

Lastly, there are many internal variables that can be altered to produce results of varying believability; there is much work to be done deciding on the values of these variables for a given scene. For example, the maximum offset distance for depth texture lookups can give a more local or wide sampling area; this produces smaller or larger shadowed areas. Another important variable is the maximum depth difference (corresponding to the maximum value defined in the depth function). Because the occlusion function returns zero occlusion for negative depth differences (occluding surface is behind the fragment) and for depth values above a certain threshold (surfaces too far apart for there to be occlusion), adjusting that threshold is paramount to creating convincing images. Also, the occlusion function itself can be adjusted to define exactly what occlusion values should correspond to what depth differences.
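One way to experiment with the shape of the occlusion function is to generate the one-dimensional table from an ordinary function. The Python sketch below is only an assumption about how that might be organized: `build_occlusion_table` is a hypothetical helper, and the linear falloff in the usage line is an arbitrary example curve, not the one taken from [Filion].

```python
def build_occlusion_table(size, curve):
    """Sample curve(t) at texel centers for t in (0, 1).

    t is the normalized depth difference: 0 means the neighbor is at
    the same depth, 1 means it is at the maximum occlusion distance.
    Values are clamped to [0, 1] so the table always holds valid
    occlusion terms.
    """
    table = []
    for i in range(size):
        t = (i + 0.5) / size
        table.append(min(max(curve(t), 0.0), 1.0))
    return table


# Example: occlusion falls off linearly with depth difference.
linear_table = build_occlusion_table(4, lambda t: 1.0 - t)
```

Swapping in a different `curve` changes which depth differences count as strongly occluding, while the maximum occlusion distance controls how depth differences map onto the table's [0, 1] coordinate.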

[Filion] Filion, Dominic, and Rob McNaughton. "5.5 Screen-Space Ambient Occlusion." Starcraft II: Effects and Techniques. Proc. of SIGGRAPH 2008. Web. 10 June 2010. <http://ati.amd.com/developer/siggraph08/chapter05-filion-starcraftii.pdf>.

Bunny Smash with SSAO - unzip and run '572_final_proj.exe' under Windows.

Commands: