Bob Somers CSC 572 Winter 2011

Kids these days...

2D just isn't good enough for these young whippersnappers. They want their movies and games coming out of the screen at them. Well, thankfully NVIDIA has a product on the market that caters just to them. The 3D Vision kit contains a pair of active-stereo shuttering glasses (explained below) and an IR transmitter to synchronize with the display.

Unfortunately, the part that's missing is OpenGL and Linux support. On their GeForce video cards, 3D Vision is only supported under Windows/DirectX, catering directly to the gaming market. However, there isn't any obvious technical reason why it shouldn't work under OpenGL and Linux. My goal was to set out and see how feasible it was to reverse engineer it and get it working.

To clarify, NVIDIA does support OpenGL and Linux with their professional line of 3D Vision kits and graphics cards (the Quadros). However, since our labs are outfitted with GeForce GTX 470 cards, simply requesting a `GL_STEREO` buffer and drawing into `GL_BACK_LEFT` and `GL_BACK_RIGHT` was out of the question, since there is no explicit driver support.

Stereo Background

The basic idea behind stereo is that if you can deliver a slightly different image to each eye, you can use parallax to create the illusion of objects at depths other than that of the medium the image is displayed on. Generating stereo pairs of images has been a solved problem for a long time, but delivering those images separately to each eye has been an active field of research. Only recently has the technology improved to the point where stereo is starting to become mainstream rather than just a gimmick.

Anaglyph Stereo

When people think of "3D", they traditionally think of anaglyph glasses. The technique relies on using different colored lenses as filters to separate the left and right eye images. While anaglyph is the easiest and cheapest method of solving the problem, it also introduces a lot of problems with correctly representing color in the stereo image. As such, it's not an acceptable method for modern media.

Polarized Stereo

This is the technique used in most 3D films today. The left and right eye images are polarized in opposite directions, and each lens contains a polarization filter to cancel out the opposing image. Vertical and horizontal polarization was used at first, but it caused problems when the viewer's head was not perfectly aligned with the screen. Modern cinemas use circular polarization (developed and marketed by RealD) where the left and right eye images are polarized clockwise and counterclockwise.

Active Stereo

Active stereo is becoming popular in home theater settings due to the nice balance between cost and image quality. Active stereo relies on shutter glasses that can control which eye is open and which is blocked. The glasses have electronics inside which synchronize with the display (usually using an infrared signal) to alternate left and right eyes. This requires the display to have a higher than normal refresh rate (usually at least 120 Hz) so that each eye can be delivered images fast enough to avoid introducing eyestrain. NVIDIA's 3D Vision kit is an active stereo system.

System Setup

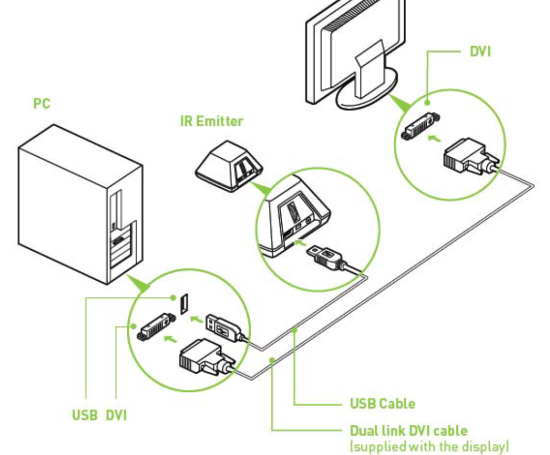

Hooking Up the Equipment

Connecting everything is relatively straightforward. You must be using a display capable of 120 Hz refresh rates (in the lab we use the Alienware OptX AW2310). You should also install the NVIDIA proprietary video drivers, available from NVIDIA's website. The README on their site is comprehensive, but in a nutshell you need to:

- Download the `.run` self-extracting driver and `chmod a+x` it.
- In Fedora 14, you need to blacklist Nouveau (the open source NVIDIA driver) from loading by adding `rdblacklist=nouveau nouveau.modeset=0` to the end of your `kernel` line in `/boot/grub/grub.conf`.
- Boot the system into run level 3 and verify no video drivers loaded. You can do this by editing `/etc/inittab` and changing the default run level from `...:5:...` to `...:3:...`, then restart the system. The text should be chunky and big if everything worked right.
- Run the `.run` driver installer and follow the on-screen prompts. When asked to create an `xorg.conf` file automatically, say yes.
- Following the same process above, reset your default run level from 3 to 5 and reboot the system.
- If all went well you should have the NVIDIA proprietary drivers installed. You can verify this by running `glxgears` and marveling at your high frame rates.

Lastly, make sure you connect the USB emitter from the 3D Vision kit to a free USB port. We'll configure this more in a bit, but for now you just need it plugged in. It should be glowing red (meaning it's not initialized). Also, your 120 Hz monitor will probably require a dual-link DVI cable, so make sure you're using one.

Driver and Refresh Rate Configuration

At this point, if you load up the NVIDIA Driver Settings program (by either launching it from System > Preferences > NVIDIA X Server Settings or by running `nvidia-settings` on the command line), you'll notice that your display is still running at 60 Hz. We need to bump up the refresh rate manually as well as turn on Vsync for OpenGL applications.

First, load up the NVIDIA settings utility and select the DFP display under your GPU on the left. You should see an option called "Force Full GPU Scaling". You need to disable it to push the refresh rate higher, so uncheck it.

Next, go back to the X Server Display Configuration tab and select the native resolution of your display and a 120 Hz refresh rate explicitly. Do not use the auto option, as it will not choose this automatically.

Lastly, check out the OpenGL tab and verify that Vsync is enabled for OpenGL applications. You can check this by running `glxgears`. It should warn you that Vsync is enabled, and render 120 frames per second if everything is set up correctly.

There are a couple of important gotchas when setting this up:

- For whatever reason, sometimes the driver will not set the refresh rate correctly on boot. It will tell you it's 120 Hz, but running `glxgears` with Vsync on to verify this will show that it's still 60 Hz. Just launch the NVIDIA settings program and you'll see the screen flicker. That's the driver saying "ohhh... haha, yeah ok, you caught me". Once the settings program loads you can just exit out and reverify that the refresh rate is now correctly 120 Hz.
- DO NOT run a fancy window compositor (like Compiz) when doing any 3D Vision work. It will interfere with the driver's ability to actually refresh at 120 Hz. You will spend hours of your time wondering why everything (including `glxgears`) reports a 120 Hz refresh rate, yet the screen isn't actually updating that fast. If you have "Desktop Effects" or anything of that nature enabled... turn it off. Use plain old Metacity and you'll be fine.

USB Emitter Mount Permissions

Last but not least, our custom software needs to write to the USB emitter to control synchronization with the shutter glasses. By default, Linux will mount unknown USB devices with read-only permissions for regular users. If we leave it like this, you'll need to be root to run your 3D applications.

We can fix this by adding a udev rule that matches the NVIDIA 3D Vision IR emitter and mounts it with read-write permissions for everyone. There's really no danger in doing so, and it lets normal users run 3D applications without root access.

In your `/etc/udev/rules.d/` directory, create a new file named `98-nvstusb.rules` with the following contents:

    # NVIDIA 3D Vision USB IR Emitter
    SUBSYSTEM=="usb", ATTR{idVendor}=="0955", ATTR{idProduct}=="0007", MODE="0666"

You can also grab this file directly from the GitHub project if you prefer.

At this point you should be able to pull down and compile the 3D Vision demo program which I wrote for this project to test if everything is set up correctly. You can grab it from the project page on GitHub. You'll need libusb-1.0 to compile it, which you can install with your distro's package manager.

Reverse Engineering

Perhaps the most important part of determining if this project was doable was reverse engineering the USB protocol used to control the IR emitter. If I could figure out how it works, I could duplicate those packets from Linux and nobody would be the wiser. To start analyzing packet traces, I used the free trial of USBTrace from SysNucleus. It was very straightforward to use, and I would highly recommend it to anyone needing to do regular USB bus analyzer work.

So how did I capture the packets? I hooked everything up on a Windows system and launched a DirectX application that uses 3D Vision with USBTrace running in the background. The trial version of USBTrace limits you to collecting only a few hundred KB of data at a time, but that was plenty for this project.

Figuring Out Initialization

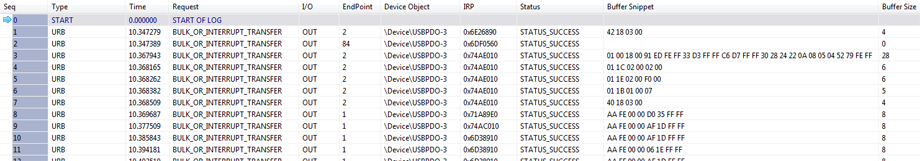

Most devices have some sort of initialization process before they are ready for regular communication. By monitoring just the USB emitter, I was able to weed out all other USB traffic and focus just on the very first couple bus transactions in USBTrace.

I did several captures to isolate static and dynamic data in the bus transactions. Luckily, everything in the initialization sequence seemed to be static. In the screenshot below, packets 1-7 appear to be the initialization sequence, because packets 8 and onwards repeat forever.

The main parts of interest here are the request type, endpoint, buffer contents, and buffer size. There are several different types of USB bus transfers, each with different properties like bandwidth or latency guarantees, but bulk transfers are the simplest and most common for general data. Each device can have up to 32 "endpoints", which you can basically imagine as talking to different components inside the device. To reconstruct these packets with our own software under Linux, we need to know what bytes are sent to what endpoint, and in which order.

The initialization sequence seems to be consistent every time, with the following byte sequence (all byte sequences are in hex):

- Send 4 bytes (`42 18 03 00`) to endpoint `0x2`.
- Send 28 bytes (`01 00 18 00 91 ED FE FF 33 D3 FF FF C6 D7 FF FF 30 28 24 22 0A 08 05 04 52 79 FE FF`) to endpoint `0x2`.
- Send 6 bytes (`01 1C 02 00 02 00`) to endpoint `0x2`.
- Send 6 bytes (`01 1E 02 00 F0 00`) to endpoint `0x2`.
- Send 5 bytes (`01 1B 01 00 07`) to endpoint `0x2`.
- Send 4 bytes (`40 18 03 00`) to endpoint `0x2`.
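A minimal sketch of the sequence above as plain data, in case it helps to see it laid out for replay. The names `INIT_ENDPOINT`, `INIT_SEQUENCE`, and `declared_payload_length` are made up for illustration and do not come from libusb or libnvstusb, and the actual USB write is left out on purpose. One pattern visible in the bytes listed here: in each packet that starts with `01`, bytes three and four read as a little-endian number equal to the count of bytes after the 4-byte header. Treating that as a length field is an assumption drawn only from these six packets.

```python
# Captured initialization packets, in the order they were sent.
# All names here are illustrative; none come from libusb or libnvstusb.
INIT_ENDPOINT = 0x02

INIT_SEQUENCE = [
    bytes.fromhex("42 18 03 00"),
    bytes.fromhex(
        "01 00 18 00 91 ED FE FF 33 D3 FF FF C6 D7 FF FF "
        "30 28 24 22 0A 08 05 04 52 79 FE FF"
    ),
    bytes.fromhex("01 1C 02 00 02 00"),
    bytes.fromhex("01 1E 02 00 F0 00"),
    bytes.fromhex("01 1B 01 00 07"),
    bytes.fromhex("40 18 03 00"),
]


def declared_payload_length(packet):
    """Read bytes 3-4 as a little-endian integer (assumed to be a length field)."""
    return int.from_bytes(packet[2:4], "little")


for packet in INIT_SEQUENCE:
    # A real program would write `packet` to INIT_ENDPOINT here.
    print(f"endpoint {INIT_ENDPOINT:#x}: {len(packet)} bytes: {packet.hex()}")
```

Replaying the sequence would then be a matter of writing each entry to `INIT_ENDPOINT`, in order, with whatever USB library is in use.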

The guys working on libnvstusb (mentioned below) seem to have reversed this a little further, figuring out that these are commands to load particular values into the timers on the onboard microcontrollers.

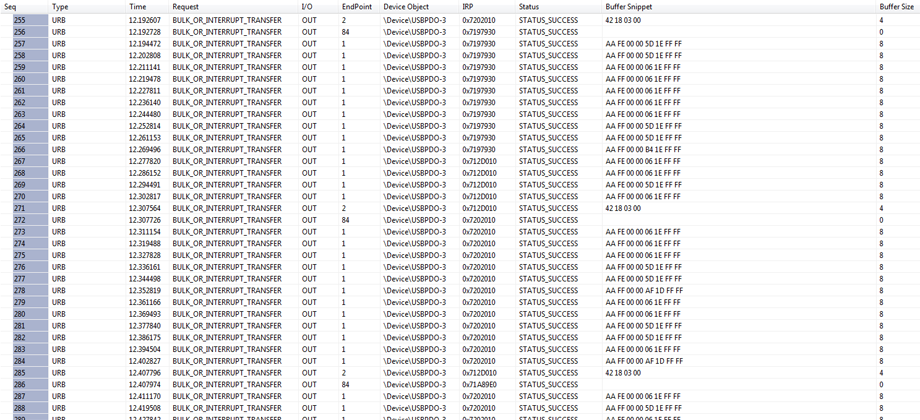

Repetitive Packets

From here we begin to see a long string of repetitive packet patterns. Of particular interest is the time delta between all the packets sent to endpoint `0x1`. Let's examine a few of them.

| Sequence Number | Time | Δt from Previous Packet |
|---|---|---|
| 258 | 12.202808 s | n/a |
| 259 | 12.211141 s | 8.333 ms |
| 260 | 12.219478 s | 8.337 ms |
| 261 | 12.227811 s | 8.333 ms |
| 262 | 12.236140 s | 8.329 ms |
| 263 | 12.244480 s | 8.340 ms |

Awesome! A 120 Hz refresh rate has a period of 8.333 ms, so it looks like those are the packets that are synchronizing our glasses. If we look even closer at the packet data, we find further evidence of this:

| Sequence Number | Δt from Previous Packet | Packet Data |
|---|---|---|
| 258 | n/a | AA FF 00 00 5D 1E FF FF |
| 259 | 8.333 ms | AA FE 00 00 06 1E FF FF |
| 260 | 8.337 ms | AA FF 00 00 06 1E FF FF |
| 261 | 8.333 ms | AA FE 00 00 06 1E FF FF |
| 262 | 8.329 ms | AA FF 00 00 06 1E FF FF |
| 263 | 8.340 ms | AA FE 00 00 06 1E FF FF |

It appears that the last bit in the second byte is controlling which eye is shuttering. If we compare these sync packets over a much wider sampling and across multiple captures, the following pattern begins to emerge:

- The first byte is always `0xAA`.
- The second byte always alternates between `0xFE` and `0xFF`.
- The third and fourth bytes are always `0x00`.
- The fifth and sixth bytes are a mystery.
- The seventh and eighth bytes are always `0xFF`.

Those mystery bytes don't seem to have a dramatic effect on generating our own packet sequence. Some people around the tubes have speculated that they might be timer offsets. They might also be some form of checksum, but that seems unlikely since the next packet arrives just 8.333 ms later and completely invalidates the state of any prior packets. Plus, you'd expect to see the same checksum on identical packets.
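The observed pattern is simple enough to generate in a few lines. The sketch below is an illustration under assumptions, not the demo's or libnvstusb's code: `build_sync_packet` and `SYNC_ENDPOINT` are hypothetical names, the default mystery bytes are simply the values seen most often in the capture above, and which of `0xFE` and `0xFF` corresponds to which eye is not established here.

```python
SYNC_ENDPOINT = 0x01  # endpoint the repeating packets were sent to in the capture


def build_sync_packet(frame_index, mystery=(0x06, 0x1E)):
    """Build one 8-byte sync packet following the observed pattern.

    The second byte alternates between 0xFF and 0xFE on successive frames.
    The left/right meaning of each value is an open question in this sketch.
    """
    eye_byte = 0xFF if frame_index % 2 == 0 else 0xFE
    return bytes([0xAA, eye_byte, 0x00, 0x00, mystery[0], mystery[1], 0xFF, 0xFF])


# Print a few consecutive packets to show the alternation.
for frame in range(4):
    print(frame, build_sync_packet(frame).hex())
```

One packet would be sent to `SYNC_ENDPOINT` per displayed frame, right after the buffer swap, so that the shutter state tracks the image on screen.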

Enter libnvstusb

At this point I had done my own tests to verify that replicating this packet pattern in Linux using libusb did indeed make the glasses shutter. However, after going to all this effort I stumbled across a brand-new open source project named libnvstusb.

They came to all the same conclusions I did, regarding packet contents, except that they had gone a few steps further in identifying the onboard microcontrollers and figuring out the timer load instructions in the initialization header, as well as reverse engineering the button and scroll wheel on the IR emitter itself. They also had support for the other two refresh rates which the 3D Vision kit supports, 100 Hz and 110 Hz.
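For reference, the frame period for each supported refresh rate is just the reciprocal of the rate. This sketch (function names are illustrative) prints the three periods and compares the 120 Hz figure against the mean of the packet timestamps captured earlier.

```python
def frame_period_ms(refresh_hz):
    """Time between refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


# Timestamps (seconds) of packets 258-263 from the capture.
CAPTURED_TIMES_S = [12.202808, 12.211141, 12.219478, 12.227811, 12.236140, 12.244480]


def mean_delta_ms(times_s):
    """Average gap between consecutive timestamps, in milliseconds."""
    deltas = [(b - a) * 1000.0 for a, b in zip(times_s, times_s[1:])]
    return sum(deltas) / len(deltas)


for hz in (100, 110, 120):
    print(f"{hz} Hz: {frame_period_ms(hz):.3f} ms per frame")
print(f"captured mean: {mean_delta_ms(CAPTURED_TIMES_S):.3f} ms")
```

The captured mean works out to roughly 8.334 ms, within a few microseconds of the ideal 120 Hz period.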

In addition, their implementation fixes a fatal flaw with mine, which I didn't notice because I was lucky. The onboard microcontroller has no permanent flash memory, so the firmware is loaded every time it's powered on by the NVIDIA driver in Windows. The Linux driver doesn't load the firmware, but I hadn't noticed because after using it successfully in Windows (where the firmware was loaded), I rebooted into Linux. Since the device was never powered off, it never lost the firmware. The libnvstusb implementation contains functionality for loading the firmware onto the USB emitter.

For all these reasons, I opted to move forward with their code base instead.

Software Tweaks

Eye Management

Controlling delivery of the correct image is handled by running OpenGL apps with Vsync enabled. A well-behaved driver implementation will cause `glutSwapBuffers()` to block until the display has actually swapped buffers, and only then release control flow back to the application. The app can then use `glutSwapBuffers()` as a crude synchronization mechanism. However, this requires that the total update and draw time fits within the 8.333 ms window. Potential ways of removing this restriction are discussed in the future work section.

With the eyes swapping every frame, it's just a matter of doing the correct camera projection based on whether we're showing the right or left eye. There are two methods for generating stereo pair images from a single mono camera, both of which are implemented in the stereo_helper.h file from the demo project.

Stereo Pairs with "Toe-In"

The toe-in method works by splitting the mono camera into two virtual cameras with eye positions to the left and right of the origin along the camera's U basis vector. The focus point of each eye remains the same, and a regular perspective projection frustum is created for each.

This is the most straightforward method, since from a programming perspective it's just a matter of shifting the eye position along the U vector and calling the familiar `gluPerspective()` and `gluLookAt()` functions. However, it can be uncomfortable to look at for long periods of time, specifically because the projection planes don't line up for each camera. Objects in the middle of the screen will be fine, but as the camera's aperture gets larger there will be more distortion near the edges of the image, causing viewer discomfort.
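As a rough sketch of the eye placement described above (the helper names are invented for illustration and this is not the code from `stereo_helper.h`), each eye is offset by half the eye separation along the U vector while both keep looking at the same focus point. The U vector is assumed here to be the normalized cross product of the view direction and the up vector.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalize(v):
    length = math.sqrt(sum(x * x for x in v))
    return tuple(x / length for x in v)


def toe_in_eyes(eye, focus, up, eye_separation):
    """Return (left_eye, right_eye) positions for the toe-in method.

    Both virtual cameras keep `focus` as their look-at target, so each one
    can use an ordinary symmetric perspective projection.
    """
    forward = _normalize(_sub(focus, eye))
    u = _normalize(_cross(forward, up))  # points to the camera's right
    half = eye_separation / 2.0
    left = tuple(e - half * c for e, c in zip(eye, u))
    right = tuple(e + half * c for e, c in zip(eye, u))
    return left, right


left_eye, right_eye = toe_in_eyes((0, 0, 5), (0, 0, 0), (0, 1, 0), 0.2)
print(left_eye, right_eye)
```

Each returned position would be handed to `gluLookAt()` with the unchanged focus point, after a normal `gluPerspective()` call.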

Stereo Pairs with Parallel Axis Asymmetric Frusta

Parallel axis asymmetric frusta is generally considered to be the "correct" way to do stereo in real-time. The basic idea is that both eyes maintain parallel view directions, so their projection screens line up.

However, to accomplish this you cannot use `gluPerspective()`, because the frustum for each eye is not symmetric. Finally we have a reason to use `glFrustum()`! Paul Bourke's site has lots of excellent information on the math required to create the required asymmetric frusta.

Because the eyes maintain their parallel viewing directions, there's no distortion of the image between the eyes and it leads to much more comfortable viewing.
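The frustum bounds follow from similar triangles: the zero-parallax window sits at the focal distance, and each eye sees it shifted sideways by half the eye separation, scaled back to the near plane. The sketch below computes the four lateral bounds that would be passed to `glFrustum()` along with the near and far distances. It is an illustration under stated assumptions (a vertical field of view, an eye index of -1 for left and +1 for right, and a hypothetical function name), not the demo's implementation.

```python
import math


def stereo_frustum(fov_y_deg, aspect, near, focal_length, eye_separation, eye):
    """Return (left, right, bottom, top) near-plane bounds for one eye.

    `eye` is -1 for the left eye and +1 for the right eye. The camera itself
    is assumed to be moved by eye * eye_separation / 2 along its U vector,
    with the view direction left unchanged (parallel axes).
    """
    top = near * math.tan(math.radians(fov_y_deg) / 2.0)
    half_width = aspect * top
    # Sideways shift of the window as seen from this eye, scaled to the near plane.
    shift = eye * 0.5 * eye_separation * near / focal_length
    return (-half_width - shift, half_width - shift, -top, top)


for name, eye in (("left", -1), ("right", +1)):
    bounds = stereo_frustum(60.0, 16 / 9, 0.1, 5.0, 0.2, eye)
    print(name, [round(b, 5) for b in bounds])
```

Projecting either eye's bounds out to the focal distance gives the same window in world space, which is the "projection screens line up" property described above.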

Results

It's pretty difficult to show a video (or even a proper screenshot) of this effect in action, since you'd need some kind of stereo system to view it properly. However, here are some screen captures from the demo application which show the left eye, right eye, and both images. When viewed without glasses on, you'll see both images.

Note that for the demo, the focal length of the camera was such that it pushed things out of the screen into negative parallax. This is generally bad practice, as some people experience motion sickness or other discomfort with extended viewing of negative parallax images. For best results, most everything in your 3D scene should be at zero parallax (screen depth) or positive parallax (going into the screen).

The code for the project demo, including everything necessary to compile and run, can be found on GitHub. You'll need the libusb-1.0 development package installed to build it, and probably some X11 development packages as well.

Future Work

At this point, the demo application must be capable of rendering 120 frames per second to keep up with `glutSwapBuffers()` and stay synchronized on the correct eye. One nice feature of having actual driver support is that you can just draw into a left and right back buffer and the driver will handle swapping the left and right front buffers automatically every 8.33 ms.

We can't perfectly replicate that, but we could simulate it using frame buffer objects in OpenGL as our virtual stereo back buffers. With a little multithreaded OpenGL voodoo, our main thread would be focusing on putting the correct FBO front buffer (left or right) into the actual back buffer, while the application is happily drawing away into the left and right back buffers.

This is less efficient, since at the application level we either have to render a fullscreen quad into the buffers to swap them, or manually copy the pixel data over. In addition, we're now drawing potentially 3 frames ahead of what the user is actually seeing. (Back FBO => Front FBO => Back Buffer => Front Buffer)

There are also some stability and quirkiness issues with libnvstusb that remain to be solved. On my test setup, if the OpenGL window was dragged around too much it would cease updating the USB emitter. Also, if the window was too big, I would start seeing color undulation, which is probably related to not being perfectly synchronized with the display. Lastly, there was still some noticeable ghosting at the top of the screen, again probably due to imperfect synchronization.