Point Based Approximate Color Bleeding

Katelyn Hicks

CSC 572 - Graduate Graphics

Dr. Zoë Wood

Spring 2015


Description

What is a surfel cloud?

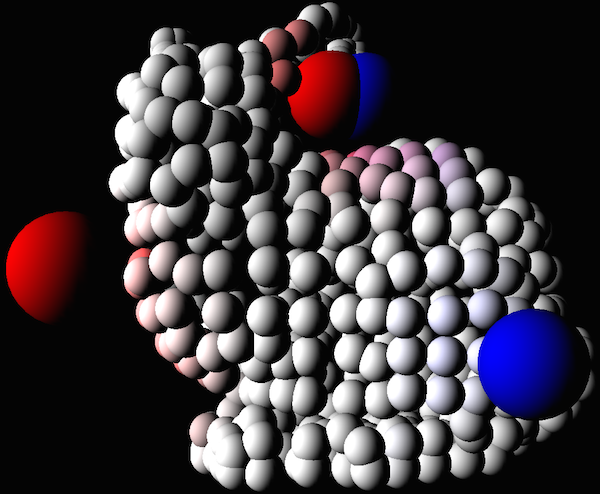

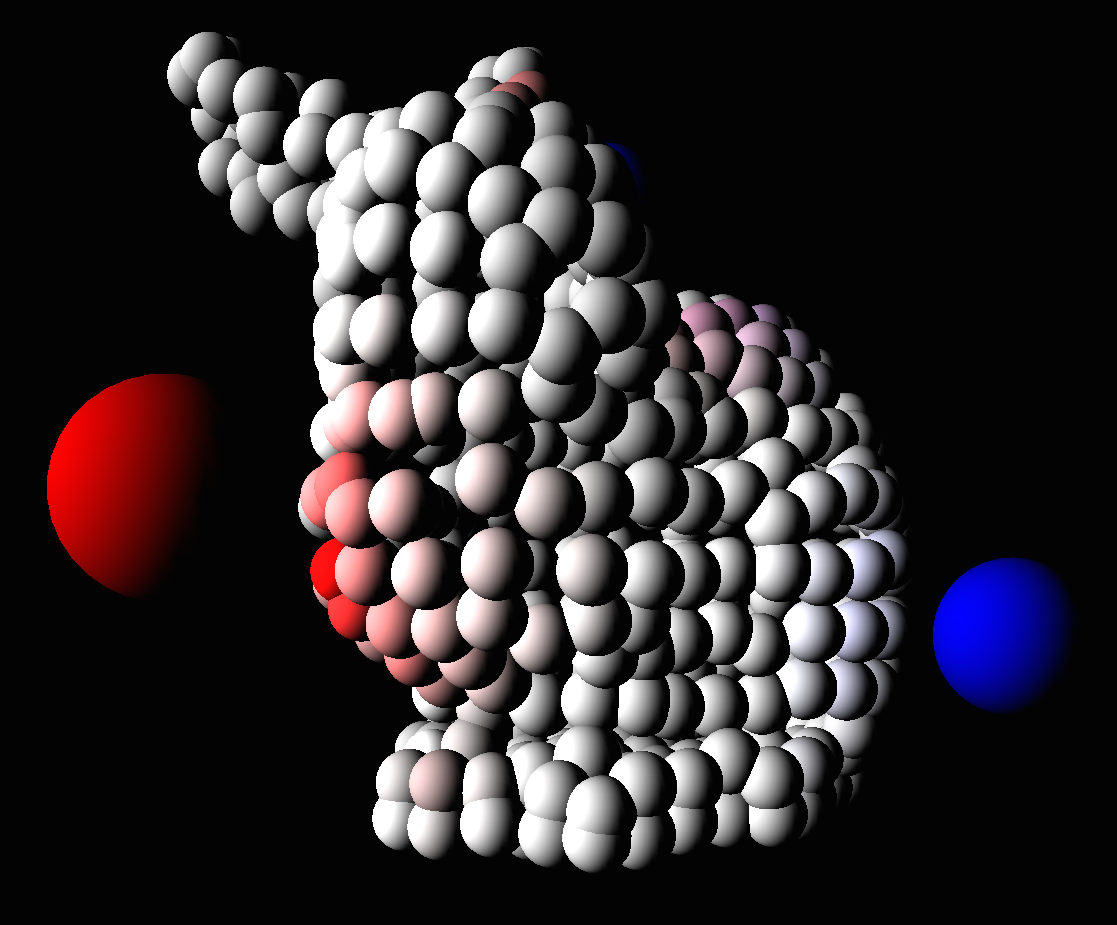

A surfel cloud is an alternative way to represent a surface, similar to a point cloud. Each surfel is a disk that has a position, color, normal, and radius. A surfel cloud is used in place of a polygonal mesh: the surfels overlap so that together they cover the surface.

Surfel clouds are used in point-based approximate color bleeding to represent the direct illumination of the scene. The cloud is queried for the N surfels closest to a given point, and those surfels are used to approximate the color that bleeds onto that point.
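As a rough illustration of the query described above, the following Python sketch stores each surfel's position, normal, color, and radius and finds the N closest surfels by brute force. The names `Surfel`, `dist_sq`, and `n_closest` are hypothetical and are not taken from the project code, which may organize its arrays differently.

```python
import heapq
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Surfel:
    position: Vec3
    normal: Vec3
    color: Vec3    # direct illumination as RGB in [0, 1]
    radius: float


def dist_sq(a: Vec3, b: Vec3) -> float:
    """Squared Euclidean distance (enough for ranking, avoids the sqrt)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def n_closest(cloud: List[Surfel], point: Vec3, n: int) -> List[Surfel]:
    """Brute-force search: the n surfels nearest to point, nearest first."""
    return heapq.nsmallest(n, cloud, key=lambda s: dist_sq(s.position, point))


# Tiny example cloud: a red, a green, and a far-away blue surfel.
up = (0.0, 1.0, 0.0)
cloud = [
    Surfel((0.0, 0.0, 0.0), up, (1.0, 0.0, 0.0), 0.1),
    Surfel((1.0, 0.0, 0.0), up, (0.0, 1.0, 0.0), 0.1),
    Surfel((5.0, 0.0, 0.0), up, (0.0, 0.0, 1.0), 0.1),
]
nearest = n_closest(cloud, (0.9, 0.0, 0.0), 2)
```

With 200,000 surfels, a linear scan like this runs once per shaded point, which is the cost a spatial data structure would reduce.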

200,000 surfels in input cloud.

Creating surfel point cloud

A preprocessing step is required before rendering with point-based approximate color bleeding: a surfel cloud must be created for the given scene, so that its disks represent the direct illumination (including shadows) of the surfaces in the scene.

I created this surfel cloud by ray tracing the scene before rendering it. The surfel data is saved so that it can be queried for each point that will be rendered. Due to time constraints, I stored the surfel cloud in arrays, but future work for this project includes storing it in an octree. That representation should be more efficient, because it organizes the surfels by position and the query we need is for the N closest surfels to a point.
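To make the octree idea concrete, here is a minimal Python sketch of an octree over surfel positions, with a radius query that skips any node whose cube lies entirely outside the search sphere. This is not the project's code: the class `OctreeNode`, its parameters `leaf_size` and `max_depth`, and the method `query_radius` are all assumptions, and the sketch assumes every position lies inside the root cube.

```python
import math


class OctreeNode:
    def __init__(self, center, half_size, points, leaf_size=8, depth=0, max_depth=10):
        self.center = center          # center of this node's cube
        self.half_size = half_size    # half of the cube's edge length
        self.points = points          # positions; kept only in leaf nodes
        self.children = []
        if len(points) > leaf_size and depth < max_depth:
            self._split(leaf_size, depth, max_depth)

    def _split(self, leaf_size, depth, max_depth):
        buckets = {}
        for p in self.points:
            # Octant key: one flag per axis, True on the positive side.
            key = tuple(p[i] >= self.center[i] for i in range(3))
            buckets.setdefault(key, []).append(p)
        h = self.half_size / 2
        for key, pts in buckets.items():
            child_center = tuple(
                self.center[i] + (h if key[i] else -h) for i in range(3)
            )
            self.children.append(
                OctreeNode(child_center, h, pts, leaf_size, depth + 1, max_depth)
            )
        self.points = []  # interior nodes hold no points themselves

    def _dist_to_cube(self, q):
        # Distance from q to this node's axis-aligned cube (0 if q is inside).
        d = [max(abs(q[i] - self.center[i]) - self.half_size, 0.0) for i in range(3)]
        return math.sqrt(sum(x * x for x in d))

    def query_radius(self, q, radius):
        """All stored positions within radius of q."""
        if self._dist_to_cube(q) > radius:
            return []  # the whole subtree is out of range
        found = [p for p in self.points if math.dist(p, q) <= radius]
        for child in self.children:
            found.extend(child.query_radius(q, radius))
        return found


# A regular 9 x 9 x 9 grid of positions inside the cube [-1, 1]^3.
grid = [(x / 4, y / 4, z / 4)
        for x in range(-4, 5) for y in range(-4, 5) for z in range(-4, 5)]
tree = OctreeNode((0.0, 0.0, 0.0), 1.0, grid)
near = tree.query_radius((0.1, 0.1, 0.1), 0.3)
```

A real version would store whole surfels in the leaves rather than bare positions. An N-closest query can be built on the same pruning test by shrinking the radius as closer candidates are found.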

The number of surfels greatly affects how much color is bled onto the surface points being rendered because it determines how much surrounding color information there is for a given point.

Calculating color bleeding

For each point that will be rendered, the surrounding surfaces need to be considered in order to accurately render the color that bleeds onto that point. We use the surfel cloud to find the N closest surfels to the current intersection point on the surface. In the full algorithm, the chosen surfels are rendered into a cube map of color that surrounds the point, similar to an environment map. The cube map is then used to determine the color that bleeds onto the point, based on the surface's normal.
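The cube-map step can be sketched in a very reduced form. The Python below assumes a "cube map" with a single color per face: each nearby surfel is assigned to the face its direction points at (the axis with the largest absolute component), the colors on each face are averaged, and the surface normal selects which face to read. The function names are hypothetical. The sketch ignores surfel size, occlusion, and solid angle, all of which a real cube-map rasterization would have to account for.

```python
FACES = ("+X", "-X", "+Y", "-Y", "+Z", "-Z")


def cube_face(direction):
    """Name of the cube-map face that a non-zero direction vector points at."""
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax == ay == az == 0:
        raise ValueError("direction must be non-zero")
    if ax >= ay and ax >= az:
        return "+X" if x > 0 else "-X"
    if ay >= az:
        return "+Y" if y > 0 else "-Y"
    return "+Z" if z > 0 else "-Z"


def build_face_colors(point, surfels):
    """Average surfel color per face; surfels is a list of (position, color)."""
    sums = {face: [0.0, 0.0, 0.0, 0] for face in FACES}  # r, g, b, count
    for position, color in surfels:
        direction = tuple(p - q for p, q in zip(position, point))
        if not any(direction):
            continue  # a surfel exactly at the point has no direction
        acc = sums[cube_face(direction)]
        for i in range(3):
            acc[i] += color[i]
        acc[3] += 1
    return {
        face: tuple(c / acc[3] for c in acc[:3]) if acc[3] else (0.0, 0.0, 0.0)
        for face, acc in sums.items()
    }


def bleed_for_normal(face_colors, normal):
    """Read the face that the surface normal points at."""
    return face_colors[cube_face(normal)]


surfels = [
    ((0.0, 2.0, 0.0), (1.0, 0.0, 0.0)),   # red, above the point
    ((0.5, 3.0, 0.0), (0.0, 0.0, 1.0)),   # blue, also mostly above
    ((-4.0, 0.0, 1.0), (0.0, 1.0, 0.0)),  # green, off to the -X side
]
faces = build_face_colors((0.0, 0.0, 0.0), surfels)
bleed = bleed_for_normal(faces, (0.0, 1.0, 0.0))
```

Reading only the face along the normal is the crudest possible lookup. Weighting all visible texels by the cosine of their angle to the normal would be closer to a diffuse response.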

In my implementation the color is a rough approximation: every surfel within a given radius of the intersection point contributes to the color bleeding for that point. As a result, a surface point surrounded by many surfels receives much stronger color bleeding than a point in a region with few surrounding surfels.
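A minimal Python sketch of this radius-based approximation follows. It assumes that each surfel inside the radius adds a fixed fraction of its color and that the sum is clamped to 1. The function name `bleed_color` and the per-surfel `weight` are hypothetical, since the exact weighting used in the project is not stated here.

```python
import math


def bleed_color(point, surfels, radius, weight=0.25):
    """Sum the colors of all surfels within radius of point.

    surfels is a list of (position, color) pairs. weight is a hypothetical
    per-surfel contribution; each channel of the result is clamped to 1.0.
    """
    total = [0.0, 0.0, 0.0]
    for position, color in surfels:
        if math.dist(position, point) <= radius:
            for i in range(3):
                total[i] += weight * color[i]
    return tuple(min(1.0, c) for c in total)


# Three red surfels at distances 0.4, 1.0, and 3.0 from the origin.
red = (1.0, 0.0, 0.0)
surfels = [((0.4, 0.0, 0.0), red), ((0.0, 1.0, 0.0), red), ((0.0, 0.0, 3.0), red)]
origin = (0.0, 0.0, 0.0)
small = bleed_color(origin, surfels, 0.5)   # only the nearest surfel counts
medium = bleed_color(origin, surfels, 1.5)  # two surfels contribute
large = bleed_color(origin, surfels, 3.5)   # all three contribute
```

Because the contributions are summed rather than averaged, both a denser cloud and a larger radius strengthen the bleed.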

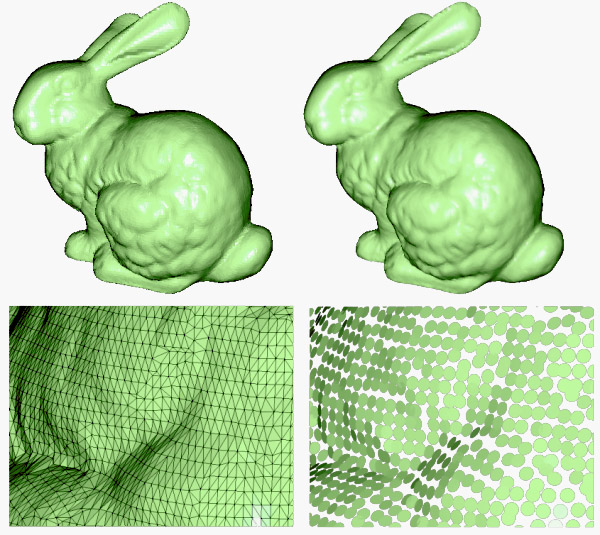

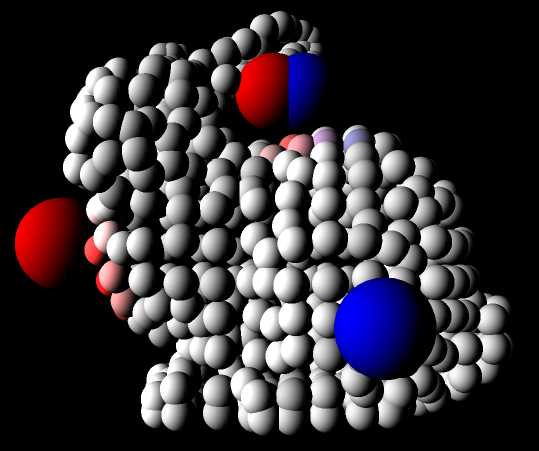

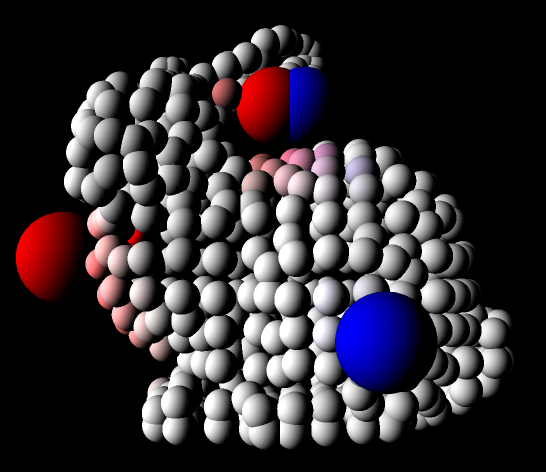

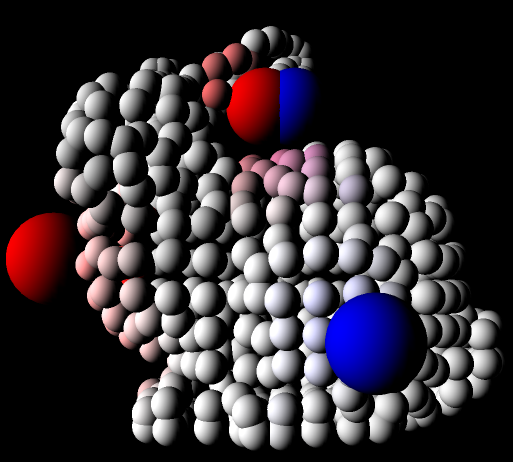

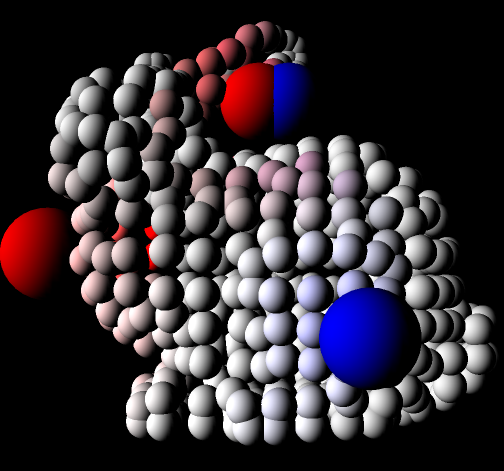

Depending on the radius used to find the closest surfels, more or less color is bled onto the current point. This can be seen in the images below, which use different values for the bleed radius.


Top Left: bleed radius 0.5

Top Right: bleed radius 1.5

Bottom Left: bleed radius 2.5

Bottom Right: bleed radius 3.5

Future Work

- If I had more time to work on this project, I would first work on representing the point cloud as an octree. This would require building the octree and then, for each parent node, calculating the aggregate power and area of its children. The aggregate is computed with spherical harmonics to find the blended color of the children, and it would be used whenever a more approximate color is acceptable for a group of surfels. This approximation matters most for surfels that are far from the point being shaded.

-

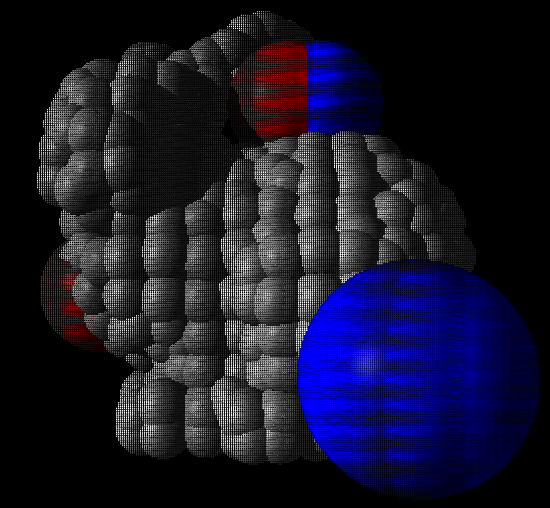

One design choice I made early on the project was that I wanted to use the OpenGL rasterizer in order to render, rather than a ray tracer. My original plan was to create a cube map for each point in space (represented as spheres in my project) that had color information about the N closest surfels surrounding the point.

Because I was using an older version of OpenGL (not 3.30), I could not set up the 3D texture and get it to render the surfel information. My solution was to send a single uniform color, the approximate color arriving at that point from all directions, which is a very rough approximation. As a result, the color bleeding is computed per object instead of per fragment, which would be more accurate.

My future work would be to ray trace this step rather than use the OpenGL rasterizer, in order to get a per-fragment representation of color bleeding. Another solution would be to send six individual textures that act as a cube map and calculate the color by using the fragment's normal to look up into the correct texture.

- One of my goals for this project was to implement PBCB in both OpenGL and Metal. I wanted first to analyze which graphics library was better suited to this project, and then to test the performance of the render. Due to time constraints, I was not able to finish porting the OpenGL project to Metal. The difficulties I faced in porting the project were that the matrix math and buffer setup are completely different. Metal uses a column-major matrix representation, which differed from the row-major layout I had been working with in OpenGL (OpenGL itself expects column-major data by default). This caused some issues when porting, especially since Metal does not provide matrix helpers such as perspective field of view or look-at for setting up the perspective and view matrices.

- Once the previous steps were complete, I would then make the application more interactive. This would include allowing the user to modify the number of surfels used when approximating color bleeding. It would also allow adjusting how many surfels are created when ray tracing the image (the preprocessing step would need to be repeated each time this changed).
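For the perspective and look-at helpers mentioned in the Metal item above, the math itself is short enough to write by hand. The Python sketch below builds both matrices as flat lists in column-major order, using the standard right-handed formulas. The function names and the `depth_zero_to_one` flag are hypothetical. The flag switches the projected depth range from [-1, 1] (OpenGL's default) to [0, 1] (the range Metal's clip space uses), which is a second difference a port has to handle.

```python
import math


def perspective(fovy_radians, aspect, near, far, depth_zero_to_one=False):
    """Right-handed perspective matrix, column-major: m[col * 4 + row]."""
    f = 1.0 / math.tan(fovy_radians / 2.0)
    m = [0.0] * 16
    m[0] = f / aspect
    m[5] = f
    m[11] = -1.0                         # w_clip = -z_eye
    if depth_zero_to_one:                # NDC depth in [0, 1]
        m[10] = far / (near - far)
        m[14] = near * far / (near - far)
    else:                                # NDC depth in [-1, 1]
        m[10] = (far + near) / (near - far)
        m[14] = 2.0 * far * near / (near - far)
    return m


def _sub(a, b):
    return [a[i] - b[i] for i in range(3)]


def _dot(a, b):
    return sum(a[i] * b[i] for i in range(3))


def _cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def _normalize(a):
    length = math.sqrt(_dot(a, a))
    return [c / length for c in a]


def look_at(eye, center, up):
    """Right-handed view matrix, column-major, camera looking down -Z."""
    f = _normalize(_sub(center, eye))    # forward
    s = _normalize(_cross(f, up))        # right
    u = _cross(s, f)                     # corrected up
    return [s[0], u[0], -f[0], 0.0,
            s[1], u[1], -f[1], 0.0,
            s[2], u[2], -f[2], 0.0,
            -_dot(s, eye), -_dot(u, eye), _dot(f, eye), 1.0]


def transform(m, v):
    """Multiply a column-major 4x4 matrix by a 4-component column vector."""
    return [sum(m[c * 4 + r] * v[c] for c in range(4)) for r in range(4)]


view = look_at((0.0, 0.0, 5.0), (0.0, 0.0, 0.0), (0.0, 1.0, 0.0))
origin_in_eye_space = transform(view, [0.0, 0.0, 0.0, 1.0])
proj = perspective(math.radians(60.0), 16.0 / 9.0, 1.0, 10.0, depth_zero_to_one=True)
clip = transform(proj, origin_in_eye_space)
```

Keeping the layout explicit in one small `transform` helper makes it easier to see where a transpose is needed when the same matrices are passed to different APIs.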

References

- "Point-Based Global Illumination for Movie Production", Per H. Christensen (2010)